Using brain-computer interface (BCI) technology to control external devices and detect mental intent has opened doors to improving the lives of patients with various neurological disorders, including amyotrophic lateral sclerosis and spinal cord injury.

Using the same technology, researchers from Carnegie Mellon University and the University of Minnesota have teamed up to make a breakthrough in noninvasive robotic device control. They have developed the first successful noninvasive mind-controlled robotic arm that can continuously track and follow a computer cursor.

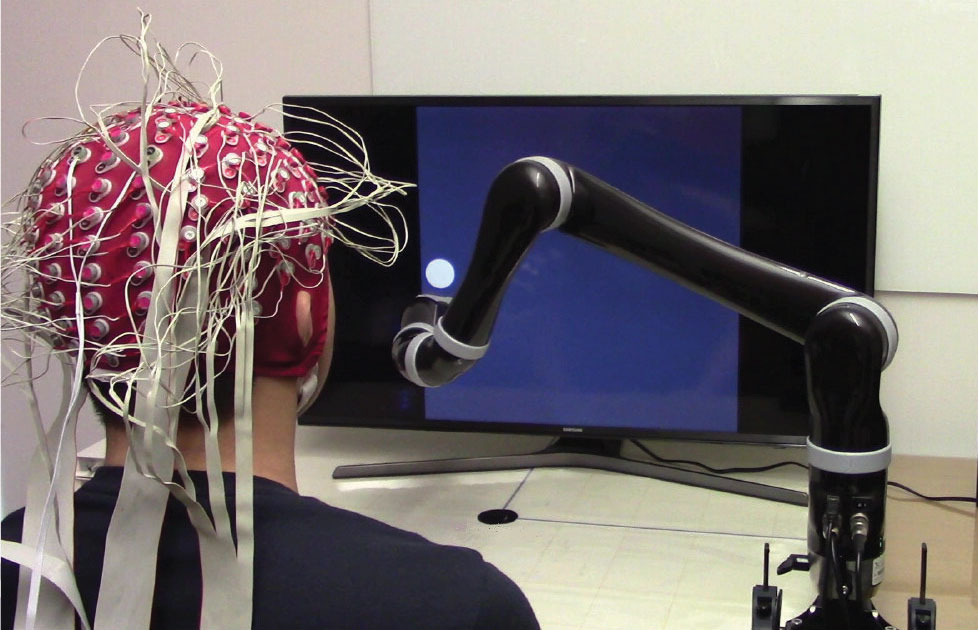

The person can control it using only pads attached on the outside of their head. The device may put us one step closer to a future in which we can all use our minds to control the tech around us.

BCIs have already shown success in controlling robotic devices using only the signals sensed from brain implants. Until now, however, these devices have been invasive, requiring a considerable amount of medical and surgical expertise to place them correctly in a patient’s brain. They also carry potential risks and high costs. As a result, their use has been limited to a few clinical trials.

One of the greatest challenges in BCI research is developing less invasive or even totally noninvasive technology that would allow paralyzed patients to control robotic arms using their minds. With the new invention, researchers appear to have overcome this barrier.

Using novel sensing and machine learning techniques, the new technology measures brain signals with electrodes placed on the outside of the head to interpret a person’s intended movement. The brain-computer interface (BCI) reaches signals deep within the brains of participants wearing EEG-based neural decoding head caps.

The sensor-equipped device measures where the brain signals originate to work out what movement the wearer is trying to make. These signals are then fed to a computer, which generates its own signals to control the robotic arm.
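To make that pipeline concrete, here is a toy sketch in Python. It is not the researchers' method: the linear decoder, the function names `decode_velocity` and `track`, the weights, and the feature values are all hypothetical, chosen only to show how a stream of measured brain-signal features could be turned into a smooth, continuous movement rather than a series of discrete jumps.

```python
# Illustrative only: a toy linear decoder with made-up numbers,
# not the decoding method used in the study.
from typing import List, Tuple


def decode_velocity(features: List[float],
                    weights: List[List[float]]) -> Tuple[float, float]:
    """Map one window of EEG-derived features to a 2D velocity (vx, vy)."""
    vx = sum(w * f for w, f in zip(weights[0], features))
    vy = sum(w * f for w, f in zip(weights[1], features))
    return vx, vy


def track(windows: List[List[float]],
          weights: List[List[float]],
          start: Tuple[float, float] = (0.0, 0.0),
          dt: float = 0.1) -> List[Tuple[float, float]]:
    """Integrate decoded velocities over time into a continuous path."""
    x, y = start
    path = [(x, y)]
    for features in windows:
        vx, vy = decode_velocity(features, weights)
        x += vx * dt  # move a small step in the decoded direction
        y += vy * dt
        path.append((x, y))
    return path


# Hypothetical decoder weights (one row per output axis) and feature windows.
WEIGHTS = [[1.0, 0.0], [0.0, 1.0]]
windows = [[1.0, 0.0], [1.0, 2.0], [0.0, 2.0]]

path = track(windows, WEIGHTS, dt=0.5)
print(path)
```

In a real system the features would come from processed EEG recordings and the decoder would be learned from training sessions. The sketch only shows the overall flow from measured signal to decoded intent to continuous arm or cursor position.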

The researchers said that they tested the device on 68 people, who each completed up to 10 sessions. In the tests, control of the arm became smooth and continuous. The team also claimed that their device was 500 percent more effective than previous attempts to do the same thing, while its artificial intelligence learning was 60 percent more effective.

Bin He, department head and professor of biomedical engineering at Carnegie Mellon University, said that the technology could be directly applied to patients and that the team plans to conduct clinical trials soon.

He said, “This work represents an important step in noninvasive brain-computer interfaces, a technology which someday may become a pervasive assistive technology aiding everyone, like smartphones.”

“Despite technical challenges using noninvasive signals, we are fully committed to bringing this safe and economic technology to people who can benefit from it,” He added.

Their research was published in the journal Science Robotics.