MIT scientists have developed a new learning system that could improve robots’ abilities to mold materials into target shapes and to make predictions about interacting with solid objects and liquids. Dubbed a learning-based particle simulator, the system could give industrial robots a more refined touch.

In a paper being presented at the International Conference on Learning Representations in May, the scientists describe a new model that learns to capture how small portions of different materials, called “particles,” interact when they’re poked and prodded. The model learns directly from data, so it can be used in cases where the underlying physics of the movements are uncertain or unknown.

Robots can then use this model as a guide to predict how liquids, as well as rigid and deformable materials, will react to the force of their touch. As a robot handles the objects, the model also helps to further refine its control.

In experiments, a two-fingered robotic hand called “RiceGrip” accurately shaped a deformable foam into a desired configuration, such as a “T” shape. In short, the model serves as a kind of “intuitive physics” brain that robots can leverage to reconstruct three-dimensional objects somewhat as humans do.

First author Yunzhu Li, a graduate student in the Computer Science and Artificial Intelligence Laboratory (CSAIL), said, “Based on this intuitive model, humans can accomplish amazing manipulation tasks that are far beyond the reach of current robots. We want to build this type of intuitive model for robots to enable them to do what humans can do.”

Co-author Jiajun Wu, a CSAIL graduate student, said, “When children are 5 months old, they already have different expectations for solids and liquids. That’s something we know at an early age, so maybe that’s something we should try to model for robots.”

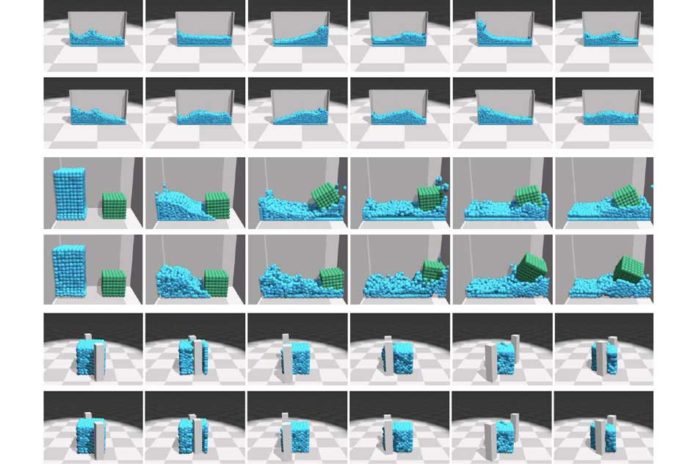

The core of the model is the “dynamic particle interaction network” (DPI-Nets), which creates dynamic interaction graphs made up of thousands of nodes and edges that can capture the complex behaviors of particles. In the simulator, particles are small spheres, hundreds of which combine to make up a liquid or a deformable object. In the graphs, each node represents a particle, and neighboring nodes are connected by directed edges, each of which represents an interaction passing from one particle to another.
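The graph construction described above can be sketched in a few lines of Python. This is a minimal illustration, not the DPI-Nets code: the function name `build_interaction_graph` and the fixed neighbor `radius` are assumptions made for the example. Every particle becomes a node, and each pair of particles closer than `radius` gets a directed edge in both directions. Because particles move, a simulator would rebuild this graph at every time step, which is what makes it dynamic.

```python
import math
from typing import List, Sequence, Tuple

Vec = Tuple[float, float, float]


def build_interaction_graph(positions: Sequence[Vec], radius: float) -> List[Tuple[int, int]]:
    """Return directed edges (sender, receiver) between particles closer than `radius`.

    Hypothetical helper: each particle is a node, and an edge (i, j) means
    particle i can pass an interaction to particle j, so every neighboring
    pair gets two edges, one in each direction.
    """
    edges = []
    for i, p in enumerate(positions):
        for j, q in enumerate(positions):
            if i != j and math.dist(p, q) < radius:
                edges.append((i, j))
    return edges


# Three particles on a line: only the first two are close enough to interact.
particles = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.5, 0.0, 0.0)]
print(build_interaction_graph(particles, radius=0.2))  # [(0, 1), (1, 0)]
```

The brute-force pairwise check costs O(n²) per step; with thousands of particles, a spatial index such as a k-d tree or a uniform grid would be the more practical way to find neighbors.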

These graphs serve as the basis for a machine-learning system called a graph neural network. During training, the model learns how particles in different materials react and reshape. It does so by implicitly calculating various properties for each particle, such as its mass and elasticity, to predict whether and where the particle will move in the graph when perturbed.
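A single message-passing round, the basic operation of a graph neural network, might look like the hypothetical sketch below. In a trained network, `edge_fn` and `node_fn` would be learned neural functions and the per-particle properties would be inferred internally; here a scalar `features` value (standing in for something like mass) is supplied by hand, and the example plugs in simple hand-written functions. All names are illustrative assumptions.

```python
from typing import Callable, List, Sequence, Tuple

Vec = Tuple[float, float, float]
EdgeFn = Callable[[Vec, float, float], Vec]  # (relative position, sender feature, receiver feature) -> message
NodeFn = Callable[[Vec, float], Vec]         # (summed messages, own feature) -> displacement


def add(a: Vec, b: Vec) -> Vec:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def scale(a: Vec, s: float) -> Vec:
    return (a[0] * s, a[1] * s, a[2] * s)


def message_passing_step(positions: Sequence[Vec], features: Sequence[float],
                         edges: Sequence[Tuple[int, int]],
                         edge_fn: EdgeFn, node_fn: NodeFn) -> List[Vec]:
    """One round of message passing over a directed particle graph.

    Each edge (i, j) computes a message from sender i to receiver j; each
    receiver sums its incoming messages, and `node_fn` turns that sum into
    a predicted displacement for the particle.
    """
    inbox: List[Vec] = [(0.0, 0.0, 0.0) for _ in positions]
    for i, j in edges:
        rel = sub(positions[i], positions[j])  # sender position relative to receiver
        inbox[j] = add(inbox[j], edge_fn(rel, features[i], features[j]))
    return [node_fn(msg, feat) for msg, feat in zip(inbox, features)]


# Hand-written stand-ins for learned functions (hypothetical):
def pull(rel: Vec, f_send: float, f_recv: float) -> Vec:
    return scale(rel, 0.1)  # a weak pull toward each neighbor


def inertia(msg: Vec, f_self: float) -> Vec:
    return scale(msg, 1.0 / f_self)  # particles with a larger feature move less


# Particle 1 (feature 2.0) moves half as far as particle 0 (feature 1.0).
moves = message_passing_step([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)], [1.0, 2.0],
                             [(0, 1), (1, 0)], pull, inertia)
```

Keeping the edge and node functions as parameters mirrors the structure of a graph neural network, where only those two functions are learned and the summation over incoming edges stays fixed.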

The model then uses a “propagation” technique, which instantaneously spreads a signal throughout the graph. The researchers customized the technique for each type of material (rigid, deformable, and liquid) so that the signal predicts the particles’ positions at incremental time steps. At each step, the model moves the particles and reconnects them if needed.
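The time-stepped rollout described above can be approximated with the toy sketch below. It assumes a single, material-agnostic propagation rule in which each hop passes on a fixed fraction (`decay`) of a neighbor's effect; the article notes that the researchers customized propagation for rigid, deformable, and liquid materials, which this sketch does not attempt. All names (`build_edges`, `propagate`, `rollout`) and parameters are hypothetical. Running several propagation hops per time step lets a push reach particles more than one edge away, and rebuilding the edges at every step models particles being reconnected as they move.

```python
import math
from typing import List, Sequence, Tuple

Vec = Tuple[float, float, float]
Edge = Tuple[int, int]


def build_edges(positions: Sequence[Vec], radius: float) -> List[Edge]:
    """Directed edges (sender, receiver) between particles closer than `radius`."""
    return [(i, j)
            for i, p in enumerate(positions)
            for j, q in enumerate(positions)
            if i != j and math.dist(p, q) < radius]


def propagate(pushes: Sequence[Vec], edges: Sequence[Edge],
              num_hops: int, decay: float) -> List[Vec]:
    """Spread each particle's external push through the graph for `num_hops` rounds.

    After k rounds a push can reach particles up to k edges away; each hop
    passes on `decay` times the sender's current effect (a hand-picked
    stand-in for a learned propagation function).
    """
    effects = [tuple(p) for p in pushes]
    for _ in range(num_hops):
        # Each round starts from the external pushes and adds the neighbor
        # effects computed in the previous round.
        new_effects = [list(p) for p in pushes]
        for i, j in edges:
            for d in range(3):
                new_effects[j][d] += decay * effects[i][d]
        effects = [tuple(e) for e in new_effects]
    return effects


def rollout(positions: Sequence[Vec], pushes_per_step: Sequence[Sequence[Vec]],
            radius: float, num_hops: int, decay: float) -> List[List[Vec]]:
    """Advance the particles one time step per entry in `pushes_per_step`."""
    current = list(positions)
    trajectory = [current]
    for pushes in pushes_per_step:
        edges = build_edges(current, radius)  # reconnect particles at every step
        effects = propagate(pushes, edges, num_hops, decay)
        current = [tuple(p[d] + e[d] for d in range(3)) for p, e in zip(current, effects)]
        trajectory.append(current)
    return trajectory
```

For example, with three particles in a row spaced closer than `radius` and a push applied only to the first, a single hop leaves the third particle untouched, while two hops let the push reach it.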

The scientists demonstrated the model by tasking the two-fingered RiceGrip robot with clamping target shapes out of deformable foam. To start, the robot uses a depth-sensing camera and object-recognition techniques to identify the foam.

As the robot starts indenting the foam, it iteratively matches the real-world position of the particles to the targeted position of the particles. Whenever the particles don’t align, it sends an error signal to the model.
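One way to picture this feedback loop is the toy controller below. It is a hypothetical sketch, not RiceGrip's actual controller: the "physics" is a single uniform shift scaled by a gain, the model's only parameter is its estimate of that gain (`model_gain`), and the error signal (the gap between observed and predicted particle positions) is used to correct that estimate with a simple projection step. At each iteration the controller picks whichever candidate action the model predicts will bring the particles closest to the target shape, applies it, and compares the outcome with the prediction.

```python
import math
from typing import List, Sequence, Tuple

Vec = Tuple[float, float, float]


def shape_error(current: Sequence[Vec], target: Sequence[Vec]) -> float:
    """Mean distance between each particle and its target position."""
    return sum(math.dist(c, t) for c, t in zip(current, target)) / len(target)


def shift(positions: Sequence[Vec], action: Vec, gain: float) -> List[Vec]:
    """Toy dynamics (hypothetical): every particle moves by gain * action."""
    return [tuple(p[d] + gain * action[d] for d in range(3)) for p in positions]


def control_loop(start: Sequence[Vec], target: Sequence[Vec], candidates: Sequence[Vec],
                 true_gain: float, model_gain: float, tol: float = 1e-6,
                 max_iters: int = 20, lr: float = 1.0) -> Tuple[List[Vec], float, List[float]]:
    """Hypothetical closed loop: plan with the model, act, and correct the model."""
    positions = list(start)
    error_signals: List[float] = []
    for _ in range(max_iters):
        if shape_error(positions, target) < tol:
            break  # observed particles match the target shape
        # Plan: choose the action whose *predicted* outcome is closest to the target.
        action = min(candidates,
                     key=lambda a: shape_error(shift(positions, a, model_gain), target))
        predicted = shift(positions, action, model_gain)
        observed = shift(positions, action, true_gain)  # stands in for the real foam
        # Error signal: how far the observed particles landed from the prediction.
        error_signals.append(shape_error(observed, predicted))
        # Correct the gain estimate by projecting the mean mismatch onto the action.
        norm2 = sum(a * a for a in action)
        if norm2 > 0:
            n = len(observed)
            mismatch = [sum(o[d] - p[d] for o, p in zip(observed, predicted)) / n
                        for d in range(3)]
            model_gain += lr * sum(m * a for m, a in zip(mismatch, action)) / norm2
        positions = observed
    return positions, model_gain, error_signals
```

Starting from a deliberately wrong `model_gain`, the first move produces a nonzero error signal, the correction brings the estimate in line with `true_gain`, and later predictions then match what is observed.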

The scientists next plan to improve the model so that robots can better predict interactions in partially observable scenarios, such as how a pile of boxes will move when pushed, even if only the boxes at the surface are visible and most of the others are hidden.

Wu said, “We’re extending our model to learn the dynamics of all particles, while only seeing a small portion.”