A new study from MIT’s Picower Institute for Learning and Memory suggests that a brain region called the posterior parietal cortex (PPC) plays an important role in converting vision into action.

Scientists pinpointed the exact role of the PPC in mice and showed that it contains a mix of neurons attuned to visual processing, decision-making, and action.

Senior author Mriganka Sur, the Paul E. and Lilah Newton Professor of Neuroscience in the Department of Brain and Cognitive Sciences, said, “Vision in the service of action begins with the eyes, but then that information has to be transformed into motor commands.”

“The study may help to explain a particular problem in some people who have suffered brain injuries or stroke, called ‘hemispatial neglect.’ In such cases, people are not able to act upon or even perceive objects on one side of their visual field. Their eyes and bodies are fine, but the brain just doesn’t produce the notion that there is something there to trigger action. Some studies have implicated damage to the PPC in such cases.”

To conduct the study, the team trained mice on a simple task: if they saw a striped pattern on the screen drift upward, they could lick a nozzle for a liquid reward, but if they saw the stripes moving to the side, they should not lick, or they would get a bitter liquid instead.

Sometimes the mice were shown the same visual patterns, but the nozzle was not made available. In this way, the researchers could compare the neurons’ responses to the visual pattern with and without the potential for motor action.

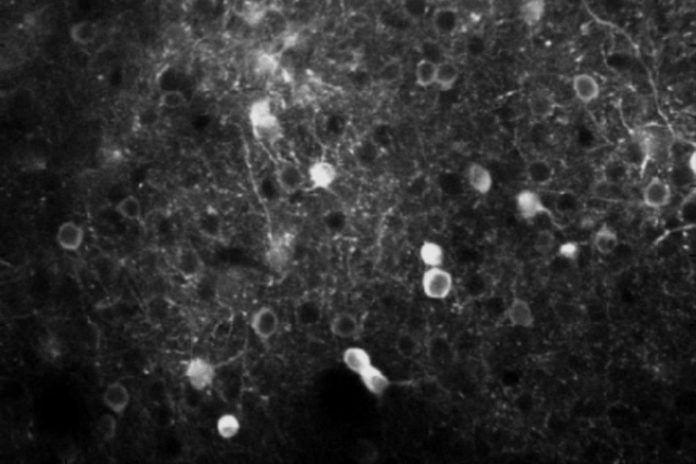

As the mice were viewing the visual patterns and deciding whether to lick, the scientists were recording the activity of several neurons in each of two regions of their brain: the visual cortex, which processes sight, and the PPC, which receives input from the visual cortex as well as from many other sensory and motor regions. The cells in each region were engineered to glow more brightly when they became active, giving the researchers an indication of exactly when they became engaged by the task.

Visual cortex neurons, as expected, mainly lit up when the pattern appeared and moved, though they were split about equally between responding to one visual pattern or the other.

Neurons in the PPC showed more varied responses. Some acted like the visual cortex neurons, but most (around 70 percent) were active based on whether the pattern was moving the right way for licking (upward) and only if the nozzle was available. In other words, most PPC neurons were selectively responsive not merely to seeing something, but to the rules of the task and the opportunity to act on the correct visual cue.

This suggests that rather than playing a predefined role in sensory or motor processing, PPC neurons can flexibly link sensory and motor information to help the mouse respond appropriately to its environment.

Even the mice’s occasional errors were informative. Consider the case when the nozzle was available and the stripes were moving sideways. In that case, a mouse should not lick even though it could.

Visual cortex neurons behaved the same way regardless of the mouse’s decision, but PPC neurons were more active just before a mouse licked by mistake than just before a mouse correctly withheld a lick. This suggested that many PPC neurons are oriented toward acting.

Not yet fully convinced that the PPC encoded the decision to lick based on seeing the correct stripe movement, the researchers switched the rules of the task. Now, the nozzle would deliver the reward after a lick to the sideways stripe pattern and emit the bitter liquid when the stripes moved upward. In other words, the mice still saw the same things, but what those things meant for action had reversed.

With the same mice retrained, the researchers then looked at the same neurons in the same regions. Visual cortex neurons didn’t change their activity at all. Those that had responded to the upward pattern or the sideways pattern still did so as before. What the mice were seeing, after all, hadn’t changed.

However, the neural responses in PPC changed along with the rules for action. Neurons that had been activated selectively for upward visual patterns now responded instead to sideways patterns. In other words, learning of the new rules was directly evident in the changed activity of neurons in the PPC.

The researchers therefore observed a direct correlate of learning at the cellular level, strongly implicating the PPC as a critical node where seeing meets acting on that information.

Sur said, “If you flipped the rules of traffic lights so that red means go, the visual input would still be driven by the colors, but the linkage to motor output neurons would switch, and that happens in the PPC.”

“Our understanding of how decisions are computed and visuomotor transformations are made will be greatly aided by future circuit-level analyses of PPC function in this powerful model system.”

The study is published in the journal Nature Communications.