The networks of neurons in our brains spontaneously organize themselves to distinguish between the different sources of incoming information. All learning in the brain rests on a process that alters the strength of the connections between neurons.

This kind of network self-organization follows the mathematical rules of the free energy principle, according to a new study by Takuya Isomura at RIKEN CBS and his international colleagues. To test this idea, they grew neurons from rat embryonic brains in a culture dish on top of a grid of small electrodes.

The principle correctly anticipated how real neural networks spontaneously remodel to discriminate incoming information and how changing neuronal excitability can interfere with the process. The findings thus have implications for building animal-like artificial intelligence and for understanding cases of impaired learning.

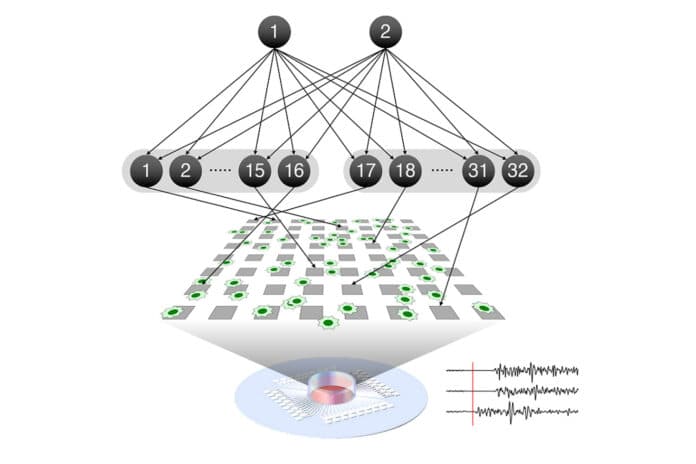

Once you can tell one sensation from another, such as two voices, some of your neurons respond to one voice while others respond to the other. This selectivity is the outcome of learning, which is a reorganization of the brain’s networks. In their culture experiment, the researchers recreated this process by using the electrode grid beneath the neural network to stimulate the neurons in a pattern that mixed two separate hidden sources.
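To make the idea of "mixing two hidden sources" concrete, here is a minimal Python sketch. It is not the researchers' stimulation protocol: the function names `mix_sources` and `agreement`, the electrode count, and the 75/25 mixing probability are all illustrative assumptions. It only shows how each electrode can carry a blend of two independent on/off signals, so that neither source is directly visible at any single electrode.

```python
import random

def mix_sources(n_steps, n_electrodes=32, p_major=0.75, seed=0):
    """Generate two hidden binary sources and a mixed stimulation pattern.

    Every number here is an illustrative assumption, not a value from the article.
    """
    rng = random.Random(seed)
    half = n_electrodes // 2
    sources, stimuli = [], []
    for _ in range(n_steps):
        # Two independent hidden sources, each on (1) or off (0).
        s1, s2 = rng.randint(0, 1), rng.randint(0, 1)
        row = []
        for e in range(n_electrodes):
            # The first half of the electrodes mostly follows source 1,
            # the second half mostly follows source 2.
            major, minor = (s1, s2) if e < half else (s2, s1)
            row.append(major if rng.random() < p_major else minor)
        sources.append((s1, s2))
        stimuli.append(row)
    return sources, stimuli

def agreement(sources, stimuli, electrode, source_index):
    """Fraction of time steps where an electrode's state equals a source's state."""
    hits = sum(row[electrode] == s[source_index]
               for s, row in zip(sources, stimuli))
    return hits / len(sources)

sources, stimuli = mix_sources(n_steps=5000)
print("electrode 0 vs source 1:", agreement(sources, stimuli, 0, 0))
print("electrode 0 vs source 2:", agreement(sources, stimuli, 0, 1))
```

Under these assumptions, an electrode in the first half matches source 1 about 87.5% of the time and source 2 about 62.5% of the time in expectation, so every electrode carries information about both sources. A network that becomes selective has, in effect, untangled that mixture.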

After 100 training sessions, the neurons had become selective: some responded strongly to source #1 and weakly to source #2, while others did the opposite. When drugs that increase or decrease neuronal excitability were added to the culture beforehand, they disrupted the learning process. This suggests that the cultured neurons behave as neurons are thought to behave in the working brain.

According to the free energy principle, this form of self-organization always follows a pattern that minimizes the free energy of the system. To see whether this principle is the guiding force behind neural network learning, the team used the recorded neural data to reverse engineer a predictive model based on it.
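For readers who want the quantity behind this statement, variational free energy is commonly written as below. The notation is the standard textbook form and is an assumption here, not taken from the article: $o$ stands for the observed inputs, $s$ for the hidden sources, $q(s)$ for the network's current beliefs about those sources, and $p(o, s)$ for a generative model of how sources produce inputs.

```latex
F = \mathbb{E}_{q(s)}\big[\ln q(s) - \ln p(o, s)\big]
  = D_{\mathrm{KL}}\big[\,q(s)\,\|\,p(s \mid o)\,\big] - \ln p(o)
```

Because the Kullback-Leibler divergence is never negative, $F \ge -\ln p(o)$. Lowering $F$ by adjusting $q$ therefore pulls the beliefs $q(s)$ toward the true posterior $p(s \mid o)$, which is one way to read "learning to tell the sources apart" in this framework.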

Data from the first 10 training sessions were then fed into the model, which was used to predict the next 90 sessions. At each step, the model accurately predicted the neurons’ responses and the strength of the connections between them. This means that knowing the initial state of the neurons is enough to predict how the network will change over time as learning occurs.

Isomura said, “Our results suggest that the free-energy principle is the self-organizing principle of biological neural networks. It predicted how learning occurred upon receiving particular sensory inputs and how it was disrupted by alterations in network excitability induced by drugs.”

“Although it will take some time, ultimately, our technique will allow modeling the circuit mechanisms of psychiatric disorders and the effects of drugs such as anxiolytics and psychedelics. Generic mechanisms for acquiring the predictive models can also be used to create next-generation artificial intelligence that learns as real neural networks do.”

Journal Reference:

- Isomura, T., Kotani, K., Jimbo, Y. et al. Experimental validation of the free-energy principle with in vitro neural networks. Nat Commun 14, 4547 (2023). DOI: 10.1038/s41467-023-40141-z