MIT scientists have developed a new algorithm for analyzing time series data, termed state-space multitaper time-frequency analysis (SS-MT). SS-MT provides a framework for analyzing time series data in real time, enabling researchers to work in a more informed way with large data sets that are nonstationary, i.e., whose characteristics evolve over time.

Whether the task is tracking brain activity in the operating room, seismic vibrations during an earthquake, or biodiversity in a single ecosystem over a million years, measuring how often an event occurs over a period of time is a fundamental data analysis task that yields critical insight in many scientific fields.

The new approach lets researchers not only quantify the shifting properties of data but also make formal statistical comparisons between arbitrary segments of the data.

Emery Brown, the Edward Hood Taplin Professor of Medical Engineering and Computational Neuroscience, said, “The algorithm functions similarly to the way a GPS calculates your route when driving. If you stray away from your predicted route, the GPS triggers the recalculation to incorporate the new information.”

“This allows you to use what you have already computed to get a more accurate estimate of what you’re about to calculate in the next time period. Current approaches to analyses of long, nonstationary time series ignore what you have already calculated in the previous interval, leading to an enormous information loss.”
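The idea of reusing the previous interval's result can be illustrated with a scalar Kalman filter, the standard recursive estimator for state-space models. The sketch below is only an illustration of that general idea, not the published SS-MT code: the random-walk model, the function name `kalman_random_walk`, and the variances `state_var` and `obs_var` are all assumptions chosen for the example.

```python
import random

def kalman_random_walk(observations, state_var, obs_var):
    """Scalar Kalman filter for x_k = x_{k-1} + v_k, y_k = x_k + e_k (assumed model)."""
    # Start from the first interval's observation, with its noise as uncertainty.
    estimate, variance = observations[0], obs_var
    estimates = [estimate]
    for y in observations[1:]:
        # Predict: carry the previous estimate forward; uncertainty grows.
        predicted_var = variance + state_var
        # Update: weight the new observation against the prediction.
        gain = predicted_var / (predicted_var + obs_var)
        estimate += gain * (y - estimate)
        variance = (1.0 - gain) * predicted_var
        estimates.append(estimate)
    return estimates

def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

# Synthetic data (illustrative only): a slowly drifting quantity,
# observed once per interval with heavy noise.
rng = random.Random(0)
truth, level = [], 0.0
for _ in range(500):
    level += rng.gauss(0.0, 0.1)
    truth.append(level)
noisy = [t + rng.gauss(0.0, 1.0) for t in truth]

filtered = kalman_random_walk(noisy, state_var=0.01, obs_var=1.0)
print("error, each interval on its own:", mse(noisy, truth))
print("error, reusing previous estimate:", mse(filtered, truth))
```

Treating every interval independently corresponds to using `noisy` directly; the filter instead blends each new observation with what was already computed, which is the GPS-style recalculation described in the quote.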

In the study, the scientists combined the strengths of two existing statistical analysis paradigms: state-space modeling and multitaper methods. The combination pairs the local optimality properties of the multitaper approach with the state-space framework's ability to combine information across intervals, producing an analysis paradigm that provides increased frequency resolution, increased noise reduction, and formal statistical inference.
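A rough sketch of how the two ingredients might fit together is given below. It is written under several assumptions and is not the authors' implementation: sine tapers (one common multitaper family) are used to keep the example NumPy-only, each tapered Fourier coefficient is assumed to follow a random walk across windows, and the variances `state_var` and `obs_var` are fixed by hand. The function name `ss_mt_sketch` and the synthetic signal are hypothetical.

```python
import numpy as np

def sine_tapers(n, k):
    """k orthonormal sine tapers of length n (assumed stand-in taper family)."""
    t = np.arange(1, n + 1)
    return np.array([np.sqrt(2.0 / (n + 1)) * np.sin(np.pi * m * t / (n + 1))
                     for m in range(1, k + 1)])

def ss_mt_sketch(signal, win_len, n_tapers, state_var, obs_var):
    """Return (plain multitaper spectrogram, state-space-filtered spectrogram)."""
    tapers = sine_tapers(win_len, n_tapers)
    n_win = len(signal) // win_len
    windows = signal[:n_win * win_len].reshape(n_win, win_len)
    # Multitaper step: tapered Fourier coefficients, shape (window, taper, frequency).
    coeffs = np.fft.rfft(windows[:, None, :] * tapers[None, :, :], axis=-1)
    mt = np.mean(np.abs(coeffs) ** 2, axis=1)

    # State-space step: an independent Kalman filter per (taper, frequency),
    # assuming each complex coefficient follows a random walk across windows.
    z = coeffs[0].copy()
    var = np.full(z.shape, float(obs_var))
    filtered = np.empty_like(coeffs)
    filtered[0] = z
    for k in range(1, n_win):
        pred_var = var + state_var               # predict from previous window
        gain = pred_var / (pred_var + obs_var)   # weight given to the new window
        z = z + gain * (coeffs[k] - z)           # update with the new coefficients
        var = (1.0 - gain) * pred_var
        filtered[k] = z
    ss_mt = np.mean(np.abs(filtered) ** 2, axis=1)
    return mt, ss_mt

# Synthetic test signal (illustrative only): a 10 Hz tone in white noise,
# sampled at 100 Hz for 60 s and analyzed in 1 s windows.
rng = np.random.default_rng(0)
fs = 100
t = np.arange(60 * fs) / fs
signal = 2.0 * np.sin(2 * np.pi * 10 * t) + rng.normal(0.0, 1.0, t.size)

mt, ss_mt = ss_mt_sketch(signal, win_len=fs, n_tapers=3, state_var=0.01, obs_var=1.0)
print("noise floor, multitaper only:", mt[:, 20:].mean())
print("noise floor, with state-space filtering:", ss_mt[:, 20:].mean())
```

In this toy setting the tone completes a whole number of cycles per window, so its coefficients stay constant across windows while the noise averages down. Real data would need the variances tuned or estimated, which this sketch does not attempt.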

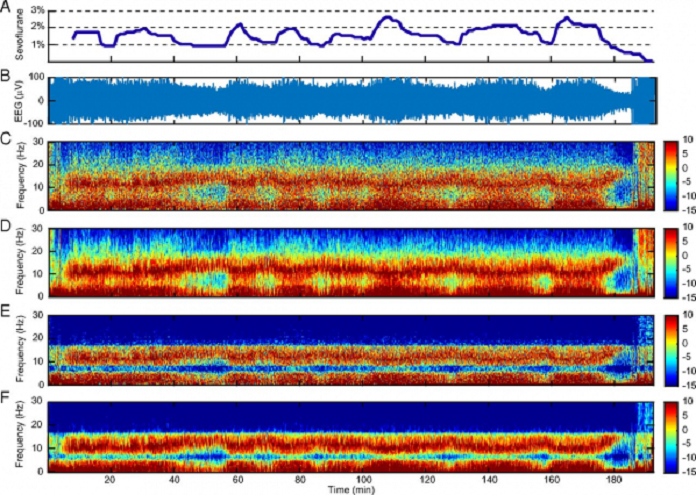

To test the algorithm, they first analyzed electroencephalogram (EEG) recordings measuring brain activity from patients receiving general anesthesia for surgery. The SS-MT algorithm produced a highly denoised spectrogram characterizing the changes in power across frequencies over time. In a second example, they used SS-MT's inference paradigm to compare different levels of unconsciousness in terms of the differences in the spectral properties of these behavioral states.

Brown said, “It produces cleaner, sharper spectrograms. The more background noise we can remove from a spectrogram, the easier it is to carry out formal statistical analyses.”

“Spectrogram estimation is a standard analytic technique applied commonly to a number of problems such as analyzing solar variations, seismic activity, stock market activity, neuronal dynamics and many other types of time series. As the use of sensor and recording technologies becomes more prevalent, we will need better, more efficient ways to process data in real time. Therefore, we anticipate that the SS-MT algorithm could find many new areas of application.”

Moving forward, the scientists plan to use this method to investigate in detail the nature of the brain’s dynamics under general anesthesia.

The paper, ‘State-space multitaper time-frequency analysis’, was published in PNAS.