A scientist at UNIST has developed a deep learning-based artificial intelligence (AI) system that helps users create applications and software for smartphones and personal computers. It also suggests improved layouts by assessing the graphical user interfaces (GUIs) of mobile applications.

Developing intuitive, easy-to-use applications is a painstaking process for users without significant experience or guidance. This is especially true on mobile, where constraints such as small screen size make the visual arrangement of icons and text increasingly important.

Professor Ko addressed this issue using deep learning-based AI and an iterative design process. The new AI system can study the strengths and weaknesses of existing GUI design patterns, evaluate GUIs created for mobile apps, and suggest alternatives.

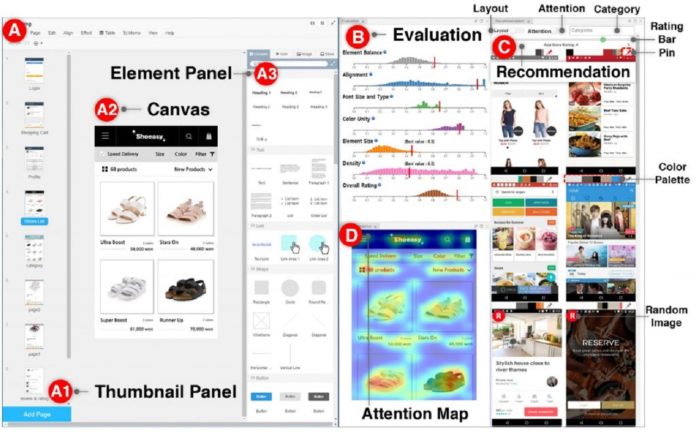

To this end, the scientists conducted semi-structured interviews, based on which they built a GUI prototyping assistance tool called GUIComp, which integrates three types of feedback mechanisms: recommendation, evaluation, and attention.

According to the research team, the tool can be connected to GUI design software as an extension, and it provides real-time, multifaceted feedback on a user’s current design. The scientists also conducted two user studies, in which they asked participants to create mobile GUIs with or without GUIComp and then asked online workers to assess the resulting GUIs.

The experimental results show that GUIComp facilitated iterative design, and that participants who used GUIComp had a better user experience and produced more acceptable designs than those who did not.

Chunggi Lee, the first author of the study, said, “We applied deep learning techniques for the design recommendation process and eye-tracker calibration. In particular, we used the k-nearest neighbor algorithm and a stacked autoencoder (SAE) to provide real-time, multifaceted feedback on a user’s current design.”

“We have put much effort into visualization to help resolve the issues that inexperienced users face when designing user-friendly GUIs. With quality data secured, this is expected to be applied to the educational sector, in areas such as web development and painting.”
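
For readers curious how a k-nearest neighbor lookup of the kind Lee mentions might work, the sketch below ranks a small gallery of designs by their distance to the current design. It is a toy illustration and not GUIComp's actual code: the function name `nearest_designs`, the design names, and the three-number vectors are all hypothetical stand-ins for the compact representations that an encoder such as a stacked autoencoder could produce.

```python
import math


def nearest_designs(query, gallery, k=3):
    """Return the names of the k gallery designs closest to `query`.

    `gallery` maps a design name to its feature vector. Closeness is
    plain Euclidean distance, a common default for k-nearest neighbor.
    """
    ranked = sorted(gallery, key=lambda name: math.dist(query, gallery[name]))
    return ranked[:k]


# Hypothetical feature vectors; in a real system these would come from
# an encoder rather than being written by hand.
gallery = {
    "login_form": (0.9, 0.1, 0.0),
    "photo_grid": (0.1, 0.9, 0.2),
    "settings_list": (0.2, 0.2, 0.9),
    "signup_form": (0.8, 0.2, 0.1),
}

# Vector for the design the user is currently working on (also made up).
current_design = (0.9, 0.1, 0.05)

recommendations = nearest_designs(current_design, gallery, k=2)
print(recommendations)
```

In this toy gallery, the two form-like designs sit closest to the current design, so they are the ones that would be offered as examples.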

Journal Reference:

- Chunggi Lee et al., GUIComp: A GUI Design Assistant with Real-Time, Multi-Faceted Feedback. arXiv:2001.05684