Understanding movement is fundamental for every living species, whether that means working out the best angle for throwing a ball or tracking the movement of predators and prey. Yet simple videos can’t give us the full picture.

Image: Jason Dorfman/MIT CSAIL

Because the videos and photos used to study motion are two-dimensional, they don’t show the underlying 3-D structure of the person or subject of interest. And without the full geometry, we cannot examine the small, subtle movements that help us move faster, or understand the precision needed to perfect our athletic form.

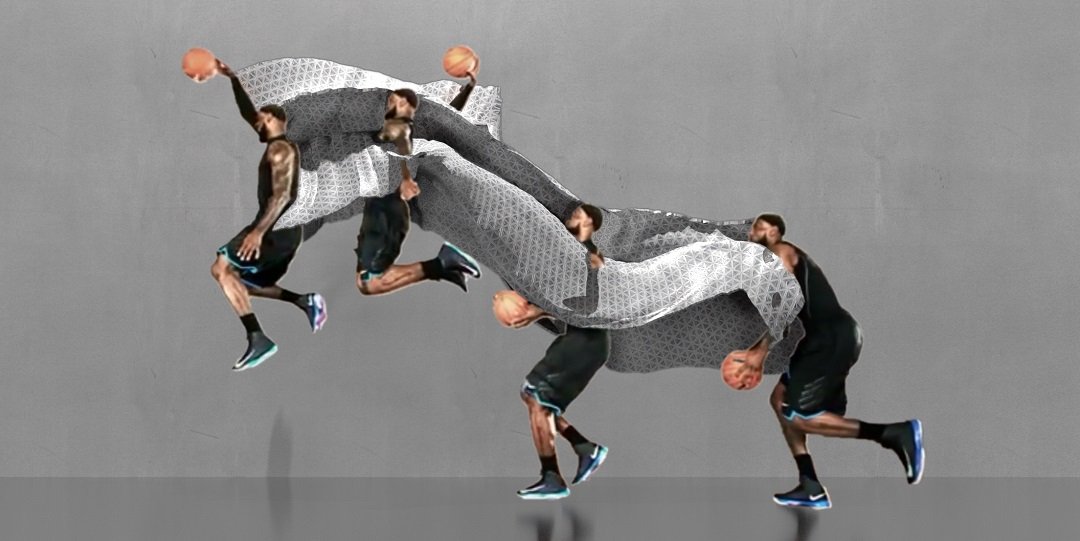

Now, MIT CSAIL scientists have come up with a new system for understanding complex motion. The system uses an algorithm that can take 2-D videos and turn them into 3-D-printed ‘motion sculptures’ that show how a human body moves through space.

Image courtesy of MIT CSAIL

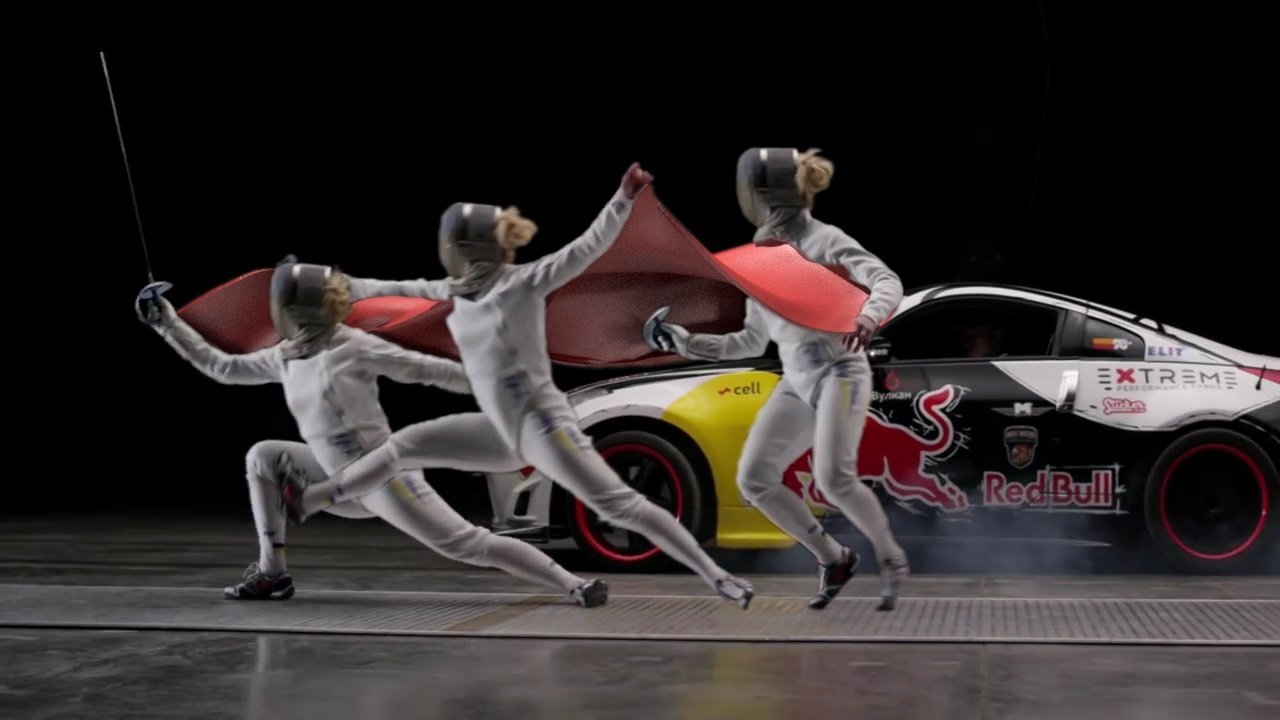

The system, dubbed ‘MoSculp’, could enable a significantly more detailed study of movement for proficient athletes, dancers, or anyone who wants to improve their physical skills.

PhD student Xiuming Zhang said, “Imagine you have a video of Roger Federer serving a ball in a tennis match, and a video of yourself learning tennis. You could then build motion sculptures of both scenarios to compare them and more comprehensively study where you need to improve.”

What MoSculp needs as input is a video sequence. Given an input video, the system first automatically detects 2-D key points on the subject’s body, for example, the hip, knee, and ankle of a ballerina while she’s performing a complex dance sequence. It then takes the best possible poses from those points and turns them into 3-D “skeletons.”


After stitching these skeletons together, the system creates a motion sculpture that can be 3-D-printed, showing the smooth, continuous path of movement traced out by the subject. Users can customize their sculptures to focus on different body parts, assign different materials to distinguish among parts, and even customize lighting.
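To give a rough sense of what "stitching skeletons together" can mean geometrically, here is a minimal Python sketch. It is not the researchers' code, and it makes no claim about how MoSculp is built. It assumes the 3-D joint positions for every frame are already available as an array of shape (frames, joints, 3), and it sweeps a single limb through time to form a ribbon of triangles. The function name `sweep_bone`, the array layout, and the toy "knee" and "ankle" data are all hypothetical.

```python
import numpy as np


def sweep_bone(joints, a, b):
    """Sweep the bone between joints a and b through time into a triangle mesh.

    joints: array of shape (T, J, 3) holding hypothetical per-frame 3-D joint
            positions (T frames, J joints).
    Returns (vertices, faces): vertices has shape (2*T, 3) and faces has
    shape (2*(T-1), 3), with faces indexing into vertices.
    """
    joints = np.asarray(joints, dtype=float)
    num_frames = joints.shape[0]

    # Interleave the two endpoints so that vertex 2*t is joint a and
    # vertex 2*t + 1 is joint b at frame t.
    vertices = np.stack([joints[:, a], joints[:, b]], axis=1).reshape(-1, 3)

    # Connect each frame to the next with a quad, split into two triangles.
    faces = []
    for t in range(num_frames - 1):
        a0, b0 = 2 * t, 2 * t + 1
        a1, b1 = 2 * t + 2, 2 * t + 3
        faces.append((a0, b0, b1))
        faces.append((a0, b1, a1))

    return vertices, np.array(faces, dtype=int).reshape(-1, 3)


# Toy input (made up): a lower leg sliding along the x-axis over four frames.
T = 4
frames = np.zeros((T, 2, 3))
frames[:, 0] = [[t, 0.0, 1.0] for t in range(T)]  # "knee"
frames[:, 1] = [[t, 0.0, 0.0] for t in range(T)]  # "ankle"

vertices, faces = sweep_bone(frames, 0, 1)
print(vertices.shape, faces.shape)
```

Repeating the sweep for every bone, and giving the ribbons some thickness, would produce a printable shape. The real system may well differ in how it represents the body and smooths the result.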

During trials, the scientists found that over 75 percent of subjects felt that MoSculp provided a more detailed visualization for studying motion than standard photography techniques.

The system works best for larger movements, like throwing a ball or taking a sweeping leap during a dance sequence. It also works in situations that might obstruct or complicate movement, such as when people are wearing loose clothing or carrying objects.

Currently, the system only handles single-person scenarios, but the team hopes to expand it to multiple people soon. This could open up the potential to study things like social disorders, interpersonal interactions, and team dynamics.

Courtney Brigham, communications lead at Adobe, who was not involved in the research, said, “Dance and highly skilled athletic motions often seem like ‘moving sculptures’ but they only create fleeting and ephemeral shapes. This work shows how to take motions and turn them into real sculptures with objective visualizations of movement, providing a way for athletes to analyze their movements for training, requiring no more equipment than a mobile camera and some computing time.”