Eye contact is an important channel of communication, yet today's technology is still not advanced enough to recognize it reliably. To address this, scientists at Saarland University and the Max Planck Institute for Informatics have developed a method that detects eye contact regardless of the type and size of the target object, the position of the camera, or the environment.

Until now, such systems worked by measuring gaze direction. This required special eye-tracking equipment that needed minutes-long calibration, and subjects had to wear a tracker.

Real-world studies, for example in a pedestrian zone or even just with several people, were at best very complicated and at worst impossible.

Andreas Bulling at Saarland University said, “Until now if you were to hang an advertising poster in a pedestrian zone, you could not determine how many people actually looked at it.”

Other approaches used machine learning with a camera placed at the target object, but they could only recognize glances at the camera itself. In addition, the difference between the training data and the data in the target environment was often too large.

Bulling said, “It was prohibitively difficult to develop a universal eye contact detector that works for both small and large target objects, in stationary and mobile settings, for a single user or an entire group, and under changing lighting conditions.”

The scientists' new method builds on a new generation of algorithms for estimating gaze direction. These algorithms use a special type of neural network known as deep learning, which is at the forefront of many areas of industry and business.

The scientists took almost two years to develop this approach step by step. In the current method, the estimated gaze directions are first grouped by clustering (cluster analysis). Clustering sorts data into groups based on shared characteristics without those groups having to be specified explicitly in advance, much like sorting apples from pears.
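To illustrate the clustering step, here is a minimal sketch in Python. It assumes, hypothetically, that each estimated gaze direction has already been projected to a 2D point on the camera plane, and it uses DBSCAN, a standard density-based clustering algorithm, as a stand-in; the researchers' actual clustering method and parameters may differ. The function name `dbscan` and the values of `eps` and `min_pts` are illustrative choices.

```python
import math
from typing import List, Optional, Tuple

# Hypothetical representation: a gaze direction projected onto the camera plane.
Point = Tuple[float, float]


def dbscan(points: List[Point], eps: float, min_pts: int) -> List[int]:
    """Label each point with a cluster id (0, 1, ...) or -1 for noise."""
    labels: List[Optional[int]] = [None] * len(points)
    cluster_id = 0

    def neighbors(i: int) -> List[int]:
        # All points within distance eps of point i (including i itself).
        px, py = points[i]
        return [j for j, (qx, qy) in enumerate(points)
                if math.hypot(px - qx, py - qy) <= eps]

    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_pts:
            labels[i] = -1  # provisional noise; may later become a border point
            continue
        labels[i] = cluster_id  # i is a core point: start a new cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster_id  # former noise becomes a border point
            if labels[j] is not None:
                continue  # already assigned; border points are not expanded
            labels[j] = cluster_id
            j_neighbors = neighbors(j)
            if len(j_neighbors) >= min_pts:
                queue.extend(j_neighbors)  # j is also a core point: keep growing
        cluster_id += 1
    return labels  # every entry is an int at this point


if __name__ == "__main__":
    # Two dense groups of gaze points plus one stray glance.
    gaze_points = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1),
                   (5.0, 5.0), (5.1, 5.0), (5.0, 5.1), (10.0, -10.0)]
    print(dbscan(gaze_points, eps=0.5, min_pts=3))  # [0, 0, 0, 0, 1, 1, 1, -1]
```

A density-based algorithm fits the description above because it does not need the number of groups in advance, and isolated glances end up labeled as noise instead of being forced into a cluster.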

In a second step, the system identifies the most likely cluster. The gaze-direction estimates it contains are then used to train an eye contact detector specific to the target object.
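The second step can be sketched in the same hypothetical setting: gaze points already labeled by a clustering step (with -1 marking noise) and the camera placed at the origin of the 2D plane. Following the assumption Bulling describes below, that the closest cluster belongs to the target object, the sketch picks the cluster whose centroid lies nearest the camera. Its members serve as positive examples and all other samples as negatives. The helper names and the simple distance-threshold detector are illustrative stand-ins, not the classifier trained in the paper.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Detector = Tuple[Point, float]  # (cluster centre, decision radius)


def cluster_centroids(points: List[Point], labels: List[int]) -> Dict[int, Point]:
    """Mean position of each cluster, ignoring noise points (label -1)."""
    groups: Dict[int, List[Point]] = {}
    for p, lab in zip(points, labels):
        if lab != -1:
            groups.setdefault(lab, []).append(p)
    return {lab: (sum(x for x, _ in ps) / len(ps), sum(y for _, y in ps) / len(ps))
            for lab, ps in groups.items()}


def select_target_cluster(points: List[Point], labels: List[int],
                          camera: Point = (0.0, 0.0)) -> int:
    """Assumption: the cluster closest to the camera belongs to the target."""
    centroids = cluster_centroids(points, labels)
    return min(centroids, key=lambda lab: math.dist(centroids[lab], camera))


def train_detector(points: List[Point], labels: List[int], target: int) -> Detector:
    """Hypothetical stand-in for training: fit a radius around the target cluster."""
    centre = cluster_centroids(points, labels)[target]
    pos = [math.dist(p, centre) for p, lab in zip(points, labels) if lab == target]
    neg = [math.dist(p, centre) for p, lab in zip(points, labels) if lab != target]
    # Put the boundary halfway between the farthest positive and the nearest
    # negative sample; fall back to the farthest positive if there are no negatives.
    radius = (max(pos) + min(neg)) / 2 if neg else max(pos)
    return centre, radius


def is_eye_contact(gaze: Point, detector: Detector) -> bool:
    centre, radius = detector
    return math.dist(gaze, centre) <= radius


if __name__ == "__main__":
    pts = [(0.1, 0.0), (0.0, 0.1), (0.1, 0.1), (3.0, 3.0), (3.1, 3.0), (3.0, 3.1), (8.0, -2.0)]
    labs = [0, 0, 0, 1, 1, 1, -1]
    target = select_target_cluster(pts, labs)
    detector = train_detector(pts, labs, target)
    print(target)                                # 0
    print(is_eye_contact((0.2, 0.2), detector))  # True
    print(is_eye_contact((3.0, 3.0), detector))  # False
```

No manual annotation enters this sketch: the labels for the detector come entirely from the clustering and the closest-cluster assumption.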

The main benefit of this approach is that it requires no involvement from the user at all. The longer the camera remains next to the target object and records data, the better the method works.

Bulling said, “In this way, our method turns normal cameras into eye contact detectors, without the size or position of the target object having to be known or specified in advance.”

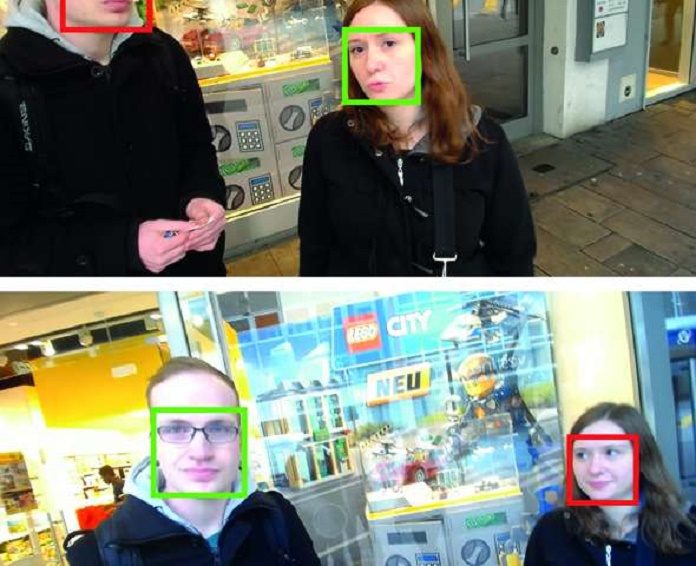

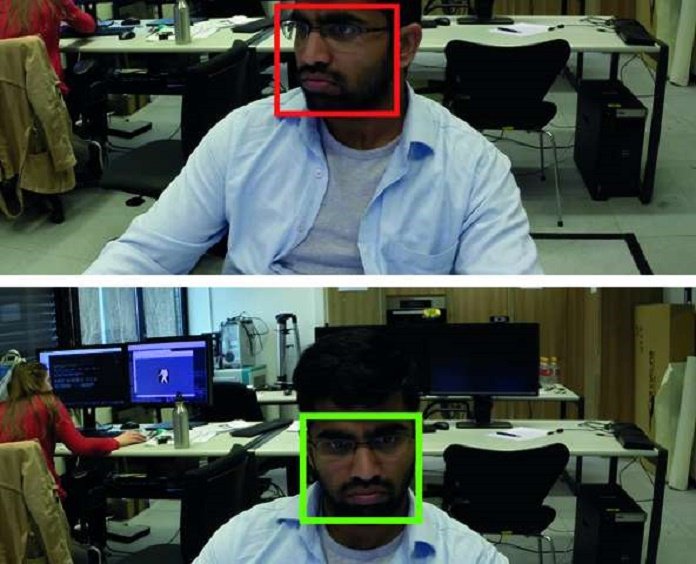

The scientists tested their method in two scenarios: in a workplace, where the camera was mounted on the target object, and in an everyday situation, where the user wore it as an on-body camera. The method proved robust even when the number of people, the lighting conditions, the camera positions, and the type and size of the target objects varied.

Bulling said, “Although we can in principle identify gaze clusters on several target objects with only one camera, we cannot yet assign these clusters to the different objects. Our method therefore currently assumes that the closest cluster belongs to the target object and ignores the remaining clusters. We will address this limitation next.”

“Nevertheless, the method we present is a great step forward. It paves the way not only for new user interfaces that automatically recognize eye contact and react to it but also for measurements of eye contact in everyday situations, such as outdoor advertising, that were previously impossible.”

More Information:

- Xucong Zhang, Yusuke Sugano and Andreas Bulling. Everyday Eye Contact Detection Using Unsupervised Gaze Target Discovery. Proc. ACM UIST 2017.

- Xucong Zhang, Yusuke Sugano, Mario Fritz, and Andreas Bulling. Appearance-Based Gaze Estimation in the Wild. Proc. IEEE CVPR 2015, 4511-4520.

- Xucong Zhang, Yusuke Sugano, Mario Fritz, and Andreas Bulling. It’s Written All Over Your Face: Full-Face Appearance-Based Gaze Estimation. Proc. IEEE CVPRW 2017.