Filtering information for search engines, acting as an opponent in a board game, recognizing images: in tasks like these, artificial intelligence now outpaces humans. Scientists are now showing how ideas from computer science could revolutionize brain research.

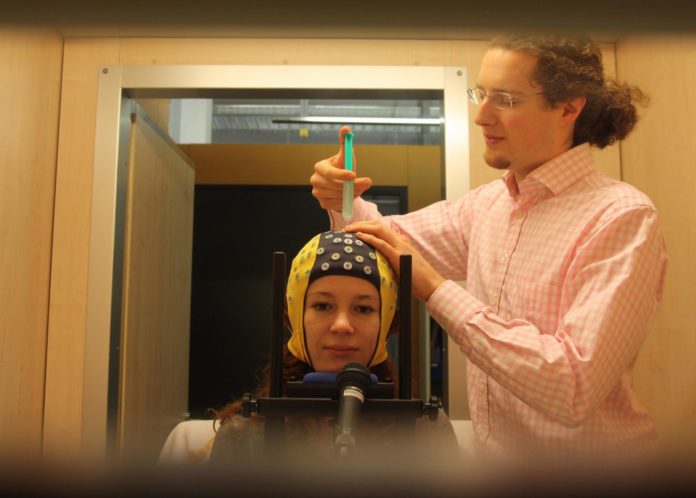

They show how a self-learning algorithm decodes human brain signals measured by an electroencephalogram (EEG). The decoded signals included performed movements as well as hand and foot movements that were merely imagined, and the imagined rotation of objects.

Compared with other systems that solve such tasks using predetermined brain signal characteristics, the self-learning algorithm works just as quickly and precisely. There is also growing demand for such diverse interfaces between human and machine. The approach could be used for early detection of epileptic seizures, for improving communication for severely paralyzed patients, or for automatic neurological diagnosis.

Computer scientist Robin Tibor Schirrmeister said, “Our software is based on brain-inspired models that have proven most helpful for decoding various natural signals such as phonetic sounds.”

“The great thing about the program is that we needn’t predetermine any characteristics. The information is processed layer by layer, that is, in multiple steps with the help of a nonlinear function. The system learns to recognize and differentiate between certain behavioral patterns from various movements as it goes along.”
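
To make "layer by layer, with a nonlinear function" concrete, here is a minimal sketch in Python. It is not the researchers' software: the layer sizes, the choice of ReLU as the nonlinearity, the random untrained weights and the random array standing in for an EEG recording are all assumptions made for illustration.

```python
import numpy as np

def relu(x):
    # Nonlinear function applied after each layer (an assumed choice).
    return np.maximum(x, 0.0)

def softmax(z):
    # Turn the final layer's scores into probabilities that sum to 1.
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()

def forward(signal, layers):
    # Pass the raw signal through the network one layer at a time.
    h = signal.ravel()
    for weights, bias in layers[:-1]:
        h = relu(weights @ h + bias)
    weights, bias = layers[-1]
    return softmax(weights @ h + bias)

rng = np.random.default_rng(0)

# Hypothetical dimensions: 4 electrodes, 50 time samples, 3 movement classes.
n_channels, n_samples, n_classes = 4, 50, 3
sizes = [n_channels * n_samples, 16, 8, n_classes]

# Random, untrained weights; a real system would learn these from data.
layers = [
    (rng.normal(scale=0.1, size=(n_out, n_in)), np.zeros(n_out))
    for n_in, n_out in zip(sizes[:-1], sizes[1:])
]

# Random numbers standing in for one raw EEG recording.
fake_eeg = rng.normal(size=(n_channels, n_samples))

probs = forward(fake_eeg, layers)
print(probs)
```

No hand-picked signal characteristics appear anywhere in the sketch: the raw array goes in, and each layer transforms the output of the one before it. In a trained network, the weights would be adjusted so that the final probabilities match the movement the person actually performed or imagined.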

Scientists are now using it to rework methods specifically for decoding EEG data. The model was originally inspired by the connections between nerve cells in the human body, where electric signals from synapses are directed from cellular protuberances to the cell’s core and back again.

Schirrmeister said, “Theories have been in circulation for decades, but it wasn’t until the emergence of today’s computer processing power that the model has become feasible.”

Until now, it has been difficult to interpret the network’s circuitry once the learning process is complete, because all algorithmic processes run in the background and are invisible.

Head investigator Tonio Ball said, “Unlike the old method, we are now able to go directly to the raw signals that the EEG records from the brain. Our system is as precise as the old one, if not more so.”

“Our vision for the future includes self-learning algorithms that can reliably and quickly recognize the user’s various intentions based on their brain signals. In addition, such algorithms could assist in neurological diagnoses.”