Have you ever wondered how search engines generate lists of relevant links?

The results come from two powerful forces: artificial intelligence and crowdsourcing.

Computer algorithms determine the relationship between words and web pages based on the frequency of linguistic connections in the large body of text on which the system has been trained.

Professional annotators then strengthen these relationships by hand-tuning the results and the algorithms that generate them. The system also relies on visitors, whose visits tell the algorithms which connections are the best ones.

But this system has flaws. The results are often not as smart as we’d like them to be. Sometimes they replicate and amplify the biases embedded in our searches.

With these flaws in mind, scientists at The University of Texas at Austin are combining AI with the insights of annotators and the information encoded in domain-specific resources. In doing so, they aim to bring a new approach to information retrieval (IR) systems. In other words, their work points toward the future of search engines.


Matthew Lease, an associate professor in the School of Information at The University of Texas at Austin, said, “An important challenge in natural language processing is accurately finding important information contained in free-text, which lets us extract it into databases and combine it with other data in order to make more intelligent decisions and new discoveries. We’ve been using crowdsourcing to annotate medical and news articles at scale so that our intelligent systems will be able to more accurately find the key information contained in each article.”

The scientists found that this method can efficiently train a neural network that accurately predicts named entities and extracts relevant information from unannotated texts.

The method also provides an estimate of each worker’s label quality, which can be transferred between tasks. This estimate is valuable for error analysis and for routing tasks intelligently. In other words, it can identify the best person to annotate each particular text.
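To make the idea of a per-worker quality estimate concrete, here is a minimal Python sketch. It is not the researchers' method: it assumes a simple majority vote as a stand-in for the true label, and the function names, the `(worker, item, label)` data format, and the sample annotations are all hypothetical.

```python
from collections import Counter, defaultdict


def estimate_worker_quality(annotations):
    """Estimate label quality per worker from crowdsourced annotations.

    `annotations` is a list of (worker, item, label) tuples (an assumed format).
    Returns (consensus, quality): consensus maps item -> majority label,
    quality maps worker -> share of that worker's labels matching the consensus.
    """
    labels_by_item = defaultdict(list)
    for worker, item, label in annotations:
        labels_by_item[item].append(label)

    # Majority vote per item; on a tie, the label seen first wins.
    consensus = {
        item: Counter(labels).most_common(1)[0][0]
        for item, labels in labels_by_item.items()
    }

    agree, total = Counter(), Counter()
    for worker, item, label in annotations:
        total[worker] += 1
        if label == consensus[item]:
            agree[worker] += 1

    quality = {worker: agree[worker] / total[worker] for worker in total}
    return consensus, quality


def best_worker(quality):
    """Pick the worker with the highest estimated quality (for task routing)."""
    return max(quality, key=quality.get)


# Made-up entity labels from three hypothetical workers.
annotations = [
    ("ann", "doc1", "PERSON"), ("bob", "doc1", "PERSON"), ("cat", "doc1", "ORG"),
    ("ann", "doc2", "ORG"), ("bob", "doc2", "ORG"), ("cat", "doc2", "ORG"),
    ("ann", "doc3", "DRUG"), ("bob", "doc3", "PERSON"), ("cat", "doc3", "DRUG"),
]
consensus, quality = estimate_worker_quality(annotations)
```

In this toy data, `ann` agrees with the consensus on every item, so `best_worker(quality)` would route the next text to her. A real system would likely model worker quality jointly with the true labels rather than trusting a raw majority vote.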

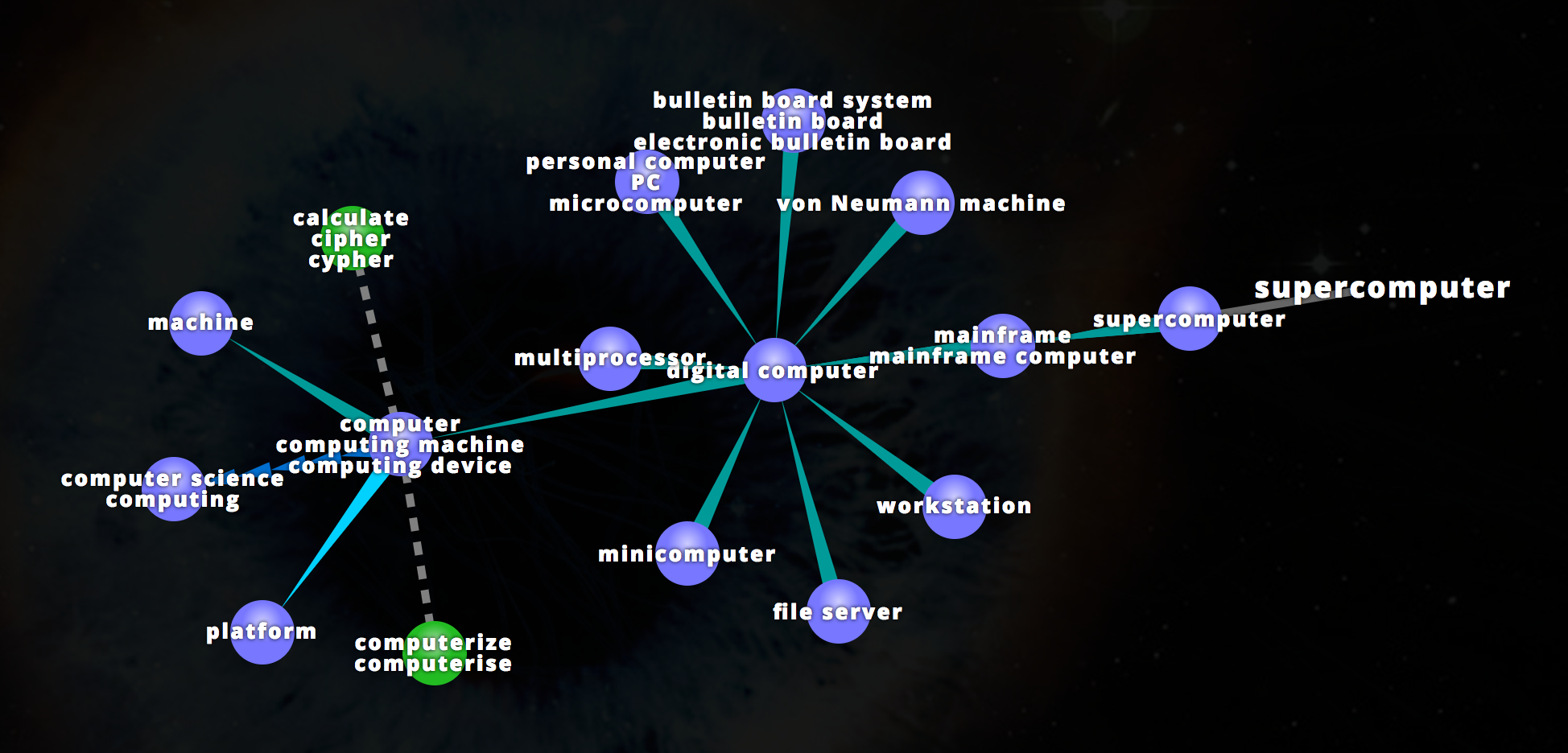

Their second model addressed the fact that neural models for natural language processing (NLP) often ignore existing resources such as WordNet, a lexical database for the English language that groups words into sets of synonyms, or domain-specific ontologies.

Their method exploits these linguistic resources via weight sharing to improve NLP models for automatic text classification. That is, words that are similar share some fraction of a weight, or assigned numerical value.

Because weight sharing constrains the number of free parameters the system must learn, it can increase the efficiency and accuracy of the neural model.
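The effect of tying weights together can be illustrated with a small Python sketch. This is a simplification under stated assumptions, not the researchers' model: it uses a linear perceptron instead of a neural network, it ties weights completely rather than sharing only a fraction, and the `SYNONYM_GROUPS` mapping is a hypothetical stand-in for a resource like WordNet.

```python
# Hypothetical synonym groups, standing in for a resource such as WordNet.
SYNONYM_GROUPS = {
    "good": "positive", "great": "positive", "excellent": "positive",
    "bad": "negative", "awful": "negative", "terrible": "negative",
}


class TiedWeightClassifier:
    """Linear sentiment scorer with one weight per synonym group."""

    def __init__(self, word_to_group):
        self.word_to_group = word_to_group
        # One free parameter per group instead of one per word.
        self.weights = {group: 0.0 for group in set(word_to_group.values())}

    def score(self, words):
        # Words outside the group map contribute nothing.
        return sum(
            self.weights[self.word_to_group[w]]
            for w in words
            if w in self.word_to_group
        )

    def predict(self, words):
        return 1 if self.score(words) > 0 else -1

    def fit(self, examples, epochs=5, lr=1.0):
        # Perceptron rule: update the shared weight when the example is
        # misclassified or sits exactly on the decision boundary.
        for _ in range(epochs):
            for words, label in examples:
                if label * self.score(words) <= 0:
                    for w in words:
                        group = self.word_to_group.get(w)
                        if group is not None:
                            self.weights[group] += lr * label


# Train on only two words; their synonyms never appear in the training data.
model = TiedWeightClassifier(SYNONYM_GROUPS)
model.fit([(["good", "movie"], 1), (["bad", "movie"], -1)])
```

Here the model learns two parameters rather than six, and `model.predict(["excellent", "film"])` comes out positive even though "excellent" never appeared in training, because it shares its weight with "good". That is the sense in which tying parameters lets a model get by with less data.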

Lease said, “Neural network models have tons of parameters and need lots of data to fit them. We had this idea that if you could somehow reason about some words being related to other words a priori, then instead of having to have a parameter for each one of those words separately, you could tie together the parameters across multiple words and in that way need fewer data to learn the model. It would realize the benefits of deep learning without large data constraints.”

In their experiments, they applied a form of weight sharing to sentiment analysis of movie reviews and to a biomedical search related to anemia. Their approach consistently improved performance on classification tasks compared with approaches that did not exploit weight sharing.

As a result, the approach provides a general framework for codifying and exploiting domain knowledge in neural network models.

Lease said, “Training neural computing models for big data takes a lot of computing time. That’s where TACC fits in as a wonderful resource, not only because of the great storage that’s available, but also a large number of nodes and the high processing speeds available for training neural models.”

“Though many deep learning libraries have been highly optimized for processing on GPUs, there’s reason to think that these other architectures will be faster in the long term once they’ve been optimized as well.”

Niall Gaffney, Director of Data Intensive Computing at TACC, said, “With the introduction of Stampede2 and its many core infrastructure, we are glad to see more optimization of CPU-based machine learning frameworks. A project like Matt’s shows the power of machine learning in both measured and simulated data analysis.”

Like Gaffney, many other scientists believe that improving core natural language processing technologies for automatic information extraction and text classification will continue to improve web search engines.