Today’s computer chips typically have three or four levels of cache, which let them use on-chip memory more efficiently. Each level of cache is more capacious but slower than the last. The sizes of the caches also represent a compromise between the needs of different types of programs.

Scientists at MIT’s Computer Science and Artificial Intelligence Laboratory have designed a system that reallocates cache access on the fly, creating new “cache hierarchies” tailored to the needs of particular programs.

In tests on a simulation of a chip with 36 cores, the scientists found that the system increased processing speed by 20 to 30 percent while reducing energy consumption by 30 to 85 percent.

Daniel Sanchez, an assistant professor in the Department of Electrical Engineering and Computer Science (EECS), said, “What you would like is to take these distributed physical memory resources and build application-specific hierarchies that maximize the performance for your particular application.”

“And that depends on many things in the application. What’s the size of the data it accesses? Does it have hierarchical reuse, so that it would benefit from a hierarchy of progressively larger memories? Or is it scanning through a data structure, so we’d be better off having a single but very large level? How often does it access data? How much would its performance suffer if we just let data drop to main memory? There are all these different tradeoffs.”

The scientists call their system, which allows chip memory to be used more efficiently, ‘Jenga’. They presented it at the International Symposium on Computer Architecture last week.

Today’s desktop computers typically have chips with four cores. But several major chipmakers have announced plans to move to six cores in the next year or so, and 16-core processors are not uncommon in high-end servers.

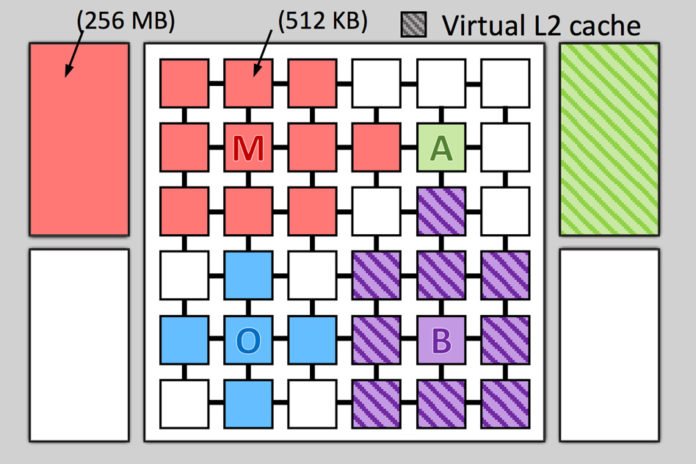

Each core in a multicore chip usually has two levels of private cache. All the cores share a third cache, which is actually broken up into discrete memory banks scattered around the chip. Some new chips also include a so-called DRAM cache, which is etched into a second chip that is mounted on top of the first.

As a result, for a given core, accessing the nearest memory bank of the shared cache is more efficient than accessing more distant ones. Jenga distinguishes between the physical locations of the separate memory banks that make up the shared cache.
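To make the distance argument concrete, here is a minimal sketch of ranking shared-cache banks by estimated latency from one core. It is not Jenga's actual model: the mesh layout, the Manhattan hop count, and the cycle counts (`base_cycles`, `cycles_per_hop`) are all illustrative assumptions.

```python
from typing import List, Tuple

Coord = Tuple[int, int]  # (row, column) of a tile on an assumed mesh


def hop_distance(a: Coord, b: Coord) -> int:
    """Manhattan distance between two tiles (assumes a mesh interconnect)."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def banks_by_latency(
    core: Coord,
    banks: List[Coord],
    base_cycles: int = 10,    # hypothetical fixed bank access cost
    cycles_per_hop: int = 2,  # hypothetical cost of each network hop
) -> List[Tuple[Coord, int]]:
    """Return (bank, estimated latency in cycles) pairs, nearest bank first."""
    estimates = [
        (bank, base_cycles + cycles_per_hop * hop_distance(core, bank))
        for bank in banks
    ]
    return sorted(estimates, key=lambda pair: pair[1])


if __name__ == "__main__":
    for bank, cycles in banks_by_latency((0, 0), [(3, 3), (0, 1), (1, 1)]):
        print(bank, cycles)
```

Under these assumed numbers, a bank one hop away costs 12 cycles and a bank six hops away costs 22, which is the kind of gap a location-aware allocator can exploit.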

Jenga builds on an earlier system called Jigsaw. Jigsaw did not build cache hierarchies, and building them makes the allocation problem much more complex.

Jenga evaluates the tradeoff between latency and space for two layers of cache simultaneously. That turns the two-dimensional latency-space curve into a three-dimensional surface, which turns out to be fairly smooth.

Scientists also developed a clever sampling algorithm for the problem of cache allocation. The algorithm systematically increases the distances between sampled points.
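One way to picture sampling with systematically increasing distances is a geometric spacing of candidate cache sizes. The sketch below is only an illustration of that idea, not the researchers' algorithm: the doubling rule, the function name `sample_sizes`, and its parameters are assumptions.

```python
from typing import List


def sample_sizes(max_size: int, first_gap: int = 1, growth: int = 2) -> List[int]:
    """Pick cache sizes to evaluate, with gaps that grow by `growth` each step.

    Small sizes are sampled densely and large sizes sparsely. The final
    point is clamped to max_size, so the last gap may be shorter.
    """
    if max_size <= 0 or first_gap <= 0 or growth < 1:
        raise ValueError("max_size and first_gap must be positive, growth >= 1")
    sizes = [0]
    gap = first_gap
    while sizes[-1] + gap < max_size:
        sizes.append(sizes[-1] + gap)
        gap *= growth
    sizes.append(max_size)
    return sizes


if __name__ == "__main__":
    print(sample_sizes(100))
```

With the defaults, a range of 100 units is covered by eight sample points instead of 101, which is why this style of sampling keeps the analysis cheap when neighboring sizes behave alike.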

Once it understands the shape of the surface, the system finds the path across it that minimizes latency. It then extracts the component of that path contributed by the first level of cache, which is a 2-D curve. At that point, it can reuse Jigsaw’s space-allocation machinery.
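The path-finding step can be sketched as follows, under a deliberately simple reading: for each total capacity, try every split between the two cache levels and keep the one with the lowest latency, then read off the first-level size as a curve over total capacity. The latency model `toy_latency` and the brute-force search are assumptions made for illustration; the article does not describe the actual search procedure.

```python
from typing import Callable, Dict, List, Tuple


def toy_latency(s1: int, s2: int) -> float:
    """Made-up average access latency for level sizes s1 and s2.

    Larger levels miss less often but are slower to hit.
    """
    miss_level1 = 1.0 / (1 + s1)        # fraction of accesses missing level 1
    miss_both = 1.0 / (1 + s1 + s2)     # fraction missing both levels
    return (1 + 0.1 * s1) + miss_level1 * (5 + 0.1 * s2) + miss_both * 100


def best_split_per_total(
    latency: Callable[[int, int], float], totals: List[int]
) -> Dict[int, Tuple[int, float]]:
    """Map each total capacity to (best first-level size, resulting latency)."""
    path = {}
    for total in totals:
        # The second level receives whatever the first level does not use.
        best_s1 = min(range(total + 1), key=lambda s1: latency(s1, total - s1))
        path[total] = (best_s1, latency(best_s1, total - best_s1))
    return path


if __name__ == "__main__":
    for total, (s1, lat) in best_split_per_total(toy_latency, [4, 16, 64]).items():
        print(f"total={total} level1={s1} level2={total - s1} latency={lat:.2f}")
```

The first element of each pair, taken across totals, is the two-dimensional curve for the first cache level that a single-level allocator could then consume.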

Po-An Tsai, a graduate student in EECS at MIT, said, “The insight here is that caches with similar capacities, say 100 megabytes and 101 megabytes, usually have similar performance.”

“The approach yielded an aggregate space allocation that was, on average, within 1 percent of that produced by a full-blown analysis of the 3-D surface, which would be prohibitively time-consuming. Adopting the computational short cut enables Jenga to update its memory allocations every 100 milliseconds, to accommodate changes in programs’ memory-access patterns.”

Beyond using chip memory more efficiently, Jenga also features a data-placement procedure that takes bandwidth limitations into account.

David Wood, a professor of computer science at the University of Wisconsin at Madison, said, “There’s been a lot of work over the years on the right way to design a cache hierarchy. There have been a number of previous schemes that tried to do some kind of dynamic creation of the hierarchy.”

“Jenga is different in that it really uses the software to try to characterize what the workload is and then do an optimal allocation of the resources between the competing processes. And that, I think, is fundamentally more powerful than what people have been doing before. That’s why I think it’s really interesting.”