Scuba divers and snorkelers spend vacations visiting exotic coastal locations to see vibrant coral ecosystems and to assess the health of the reefs that are so essential to fisheries, tourism and thriving marine ecosystems.

To see clearly through moving water and image reefs, Ved Chirayath, a scientist at NASA Ames Research Center in Silicon Valley, California, has developed a new hardware and software technique called fluid lensing.

Fluid lensing software strips away the distortion caused by moving water so researchers can easily see corals at centimeter resolution. These image data can be used to distinguish branching from mounding coral types and healthy coral from coral that is sick or dying. They can also be used to identify sandy or rocky material.

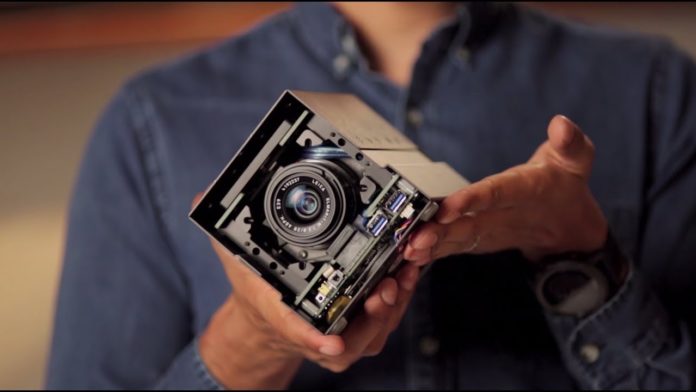

The software is built into an instrument called Fluid Cam that can be flown on a drone. Scientists hope the device could one day fly on an orbiting spacecraft to gather image data on the world’s reefs.

Chirayath said, “Imagine you’re looking at something sitting at the bottom of a swimming pool. If no swimmers are around and the water is still, you can easily see it. But if someone dives in the water and makes waves, that object becomes distorted. You can’t easily distinguish its size or shape.”

“Ocean waves do the same thing, even in the clearest of tropical waters. Through this camera technology, you can assess coral over an entire region or see how reefs are faring on a global scale.”

To look for specific coral attributes, Chirayath’s team is cataloging the data it has collected and adding it to a database used to train a supercomputer to rapidly sort the data into known types, a process called machine learning. Thanks to advances in both the tools that collect the data and the machine learning techniques that rapidly assess it, coral researchers are a step closer to having more Earth observations to help them understand our planet’s reefs.
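
For readers curious what "training a computer to sort data into known types" can look like, here is a minimal sketch in Python. It is not the team's method: it uses a simple nearest-centroid classifier as a stand-in, and the two features (a color score and a texture score), their values, and the function names are all hypothetical, chosen only to illustrate the idea of learning from labeled examples.

```python
from collections import defaultdict
from math import dist


def train_centroids(samples):
    """Average the feature vectors of each label to get one centroid per type."""
    sums = {}
    counts = defaultdict(int)
    for features, label in samples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
        for i, value in enumerate(features):
            sums[label][i] += value
        counts[label] += 1
    return {
        label: tuple(total / counts[label] for total in totals)
        for label, totals in sums.items()
    }


def classify(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Hypothetical labeled catalog: (color score, texture score) -> known type.
# Real image data would have far more features and examples.
TRAINING = [
    ((0.8, 0.9), "branching coral"),
    ((0.7, 0.8), "branching coral"),
    ((0.6, 0.3), "mounding coral"),
    ((0.5, 0.2), "mounding coral"),
    ((0.1, 0.1), "sand"),
    ((0.2, 0.0), "sand"),
]

centroids = train_centroids(TRAINING)
print(classify(centroids, (0.75, 0.85)))
```

The cataloged examples play the role of the database described above: the more labeled samples each type has, the better its average represents that type, and new observations are sorted by whichever known type they most resemble.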