Human-computer interaction is mainly realized through a mouse, keyboard, remote control, and touch screen. However, actual interpersonal communication is primarily performed through a more natural and intuitive noncontact manner, such as sound and physical movements, which are considered to be flexible and efficient.

Researchers have been attempting to develop machines that recognize human intentions through the noncontact communication modes that humans use, such as sound, facial expressions, body language, and gestures.

Among these modes, hand gestures are an important part of human language. Progress in hand gesture recognition therefore directly affects how natural and flexible human-computer interaction can be.

Recent progress in camera systems, image analysis, and machine learning has made optical-based gesture recognition a more attractive option in most contexts than approaches relying on wearable sensors or data gloves, as used by Anderton in Minority Report. However, current methods are hindered by various limitations, including high computational complexity, low speed, poor accuracy, or a low number of recognizable gestures.

To tackle these issues, a team led by Zhiyi Yu of Sun Yat-sen University, China, recently developed a new hand gesture recognition algorithm that strikes a good balance between complexity, accuracy, and applicability. As detailed in their paper, which was published in the Journal of Electronic Imaging, the team adopted innovative strategies to overcome key challenges and realize an algorithm that can be easily applied to consumer-level devices.

One of the main features of the algorithm is adaptability to different hand types. The algorithm first tries to classify the hand type of the user as either slim, normal, or broad, based on three measurements accounting for relationships between palm width, palm length, and finger length. If this classification is successful, subsequent steps in the hand gesture recognition process only compare the input gesture with stored samples of the same hand type. “Traditional simple algorithms tend to suffer from low recognition rates because they cannot cope with different hand types. By first classifying the input gesture by hand type and then using sample libraries that match this type, we can improve the overall recognition rate with almost negligible resource consumption,” explains Yu.
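The hand-type step can be illustrated with a minimal Python sketch. The article does not give the three measurements or their thresholds, so the ratios, the cut-off values, the majority vote, and the names `classify_hand_type` and `select_samples` are all hypothetical; only the overall flow (classify first, then restrict the sample library to the matching type) comes from the description above.

```python
from collections import Counter
from typing import Optional


def _vote(ratio: float, slim_below: float, broad_above: float) -> str:
    """Map one ratio to a hand-type vote using assumed cut-offs."""
    if ratio < slim_below:
        return "slim"
    if ratio > broad_above:
        return "broad"
    return "normal"


def classify_hand_type(palm_width: float, palm_length: float,
                       finger_length: float) -> Optional[str]:
    """Return 'slim', 'normal', 'broad', or None if classification fails.

    The three ratios and all thresholds are illustrative assumptions,
    not values taken from the paper.
    """
    if min(palm_width, palm_length, finger_length) <= 0:
        return None  # unusable measurements
    votes = [
        _vote(palm_width / palm_length, 0.75, 0.95),
        _vote(palm_width / finger_length, 0.95, 1.20),
        _vote(palm_length / finger_length, 1.15, 1.40),
    ]
    hand_type, count = Counter(votes).most_common(1)[0]
    # Require at least two of three ratios to agree.
    return hand_type if count >= 2 else None


def select_samples(library: list, hand_type: Optional[str]) -> list:
    """Keep only samples of the same hand type; fall back to the full
    library when the hand type could not be determined."""
    if hand_type is None:
        return list(library)
    return [s for s in library if s["hand_type"] == hand_type]
```

With these assumed thresholds, `classify_hand_type(7.0, 10.0, 8.0)` yields `"slim"`, and a matching call to `select_samples` would then discard every stored sample labeled `"normal"` or `"broad"` before any gesture comparison takes place.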

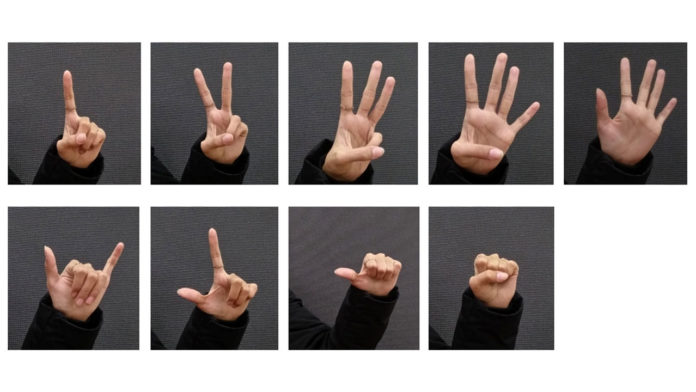

Another key aspect of the team’s method is using a “shortcut feature” to perform a pre-recognition step. While the recognition algorithm is capable of identifying an input gesture out of nine possible gestures, comparing all the features of the input gesture with those of the stored samples for all possible gestures would be very time-consuming. To solve this problem, the pre-recognition step calculates a ratio of the area of the hand to select the three most likely gestures of the possible nine. This simple feature is enough to narrow down the number of candidate gestures to three, out of which the final gesture is decided using a much more complex and high-precision feature extraction based on “Hu invariant moments.” Yu says, “The gesture pre-recognition step not only reduces the number of calculations and hardware resources required but also improves recognition speed without compromising accuracy.”
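The two-stage idea described above (a cheap scalar feature to shortlist three candidates, then a costlier comparison on the shortlist only) can be sketched as follows. This is an illustration under stated assumptions, not the team's implementation: the article does not define the area ratio, so `area_ratio` here assumes "hand pixels divided by bounding-box area"; the sample dictionary layout, the Euclidean distance, and the function names are hypothetical; and the `features` vectors are assumed to be precomputed elsewhere (for example, from Hu invariant moments).

```python
import math


def area_ratio(mask: list) -> float:
    """Hand pixels divided by bounding-box area (assumed definition)."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    if not rows:
        return 0.0
    cols = [c for c in range(len(mask[0])) if any(row[c] for row in mask)]
    box = (rows[-1] - rows[0] + 1) * (cols[-1] - cols[0] + 1)
    hand = sum(1 for row in mask for v in row if v)
    return hand / box


def shortlist(input_ratio: float, samples: list, k: int = 3) -> list:
    """Stage 1: return the k gestures whose stored ratio is closest."""
    best = {}
    for s in samples:
        d = abs(s["area_ratio"] - input_ratio)
        if d < best.get(s["gesture"], math.inf):
            best[s["gesture"]] = d
    return sorted(best, key=best.get)[:k]


def recognize(input_ratio: float, input_features: list,
              samples: list, k: int = 3):
    """Stage 2: compare full feature vectors, shortlisted gestures only."""
    candidates = set(shortlist(input_ratio, samples, k))
    best_gesture, best_dist = None, math.inf
    for s in samples:
        if s["gesture"] not in candidates:
            continue  # pruned by the cheap pre-recognition step
        d = math.dist(input_features, s["features"])
        if d < best_dist:
            best_gesture, best_dist = s["gesture"], d
    return best_gesture
```

The design choice is that the expensive distance is evaluated for at most `k` gestures instead of all nine, which is where the reported savings in computation would come from.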

The team tested their algorithm on a commercial PC processor and an FPGA platform using a USB camera. They had 40 volunteers make the nine hand gestures multiple times to build up the sample library and another 40 volunteers to determine the system’s accuracy. Overall, the results showed that the proposed approach could recognize hand gestures in real time with an accuracy exceeding 93%, even if the input gesture images were rotated, translated, or scaled. According to the researchers, future work will focus on improving the algorithm’s performance under poor lighting conditions and increasing the number of possible gestures.
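One plausible contributor to the robustness against rotated and translated inputs is the use of Hu invariant moments, which are built from normalized central moments so that they do not change under these transformations. The sketch below computes only the first two of the seven Hu invariants for a binary mask and checks them on a shifted and a 90°-rotated copy. The helper names and the toy shape are illustrative assumptions. On rasterized images, scale invariance holds only approximately, so it is not checked here.

```python
import math


def hu_first_two(mask: list) -> tuple:
    """First two Hu invariants of a binary mask (nested lists of 0/1)."""
    pts = [(x, y) for y, row in enumerate(mask)
           for x, v in enumerate(row) if v]
    if not pts:
        raise ValueError("mask contains no foreground pixels")
    m00 = len(pts)
    cx = sum(x for x, _ in pts) / m00
    cy = sum(y for _, y in pts) / m00

    def eta(p: int, q: int) -> float:
        # Central moment, normalized by m00^(1 + (p + q) / 2).
        mu = sum((x - cx) ** p * (y - cy) ** q for x, y in pts)
        return mu / m00 ** (1 + (p + q) / 2)

    n20, n02, n11 = eta(2, 0), eta(0, 2), eta(1, 1)
    return (n20 + n02, (n20 - n02) ** 2 + 4 * n11 ** 2)


def translate(mask: list, dx: int, dy: int) -> list:
    """Shift the shape right by dx and down by dy using zero padding."""
    width = len(mask[0])
    top = [[0] * (width + dx) for _ in range(dy)]
    return top + [[0] * dx + list(row) for row in mask]


def rotate90(mask: list) -> list:
    """Rotate the mask by 90 degrees clockwise."""
    return [list(row) for row in zip(*mask[::-1])]


def same(a: tuple, b: tuple) -> bool:
    """Compare two invariant tuples up to floating-point rounding."""
    return all(math.isclose(u, v, abs_tol=1e-9) for u, v in zip(a, b))
```

For an L-shaped mask such as `[[1, 0, 0], [1, 0, 0], [1, 1, 1]]`, `same(hu_first_two(shape), hu_first_two(rotate90(shape)))` and the equivalent check against `translate(shape, 2, 3)` both hold, which is the property a matching step based on such features relies on.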

“However, the proposed algorithm has some limitations. Although the experiment can cope with a complex background, a relatively stable illumination condition is required for effective recognition. In addition, the number of recognized gestures is relatively small. Future work will focus on solving lighting effects and identifying a larger number of gestures,” the study notes.

Gesture recognition has many promising fields of application and could pave the way to new ways of controlling electronic devices. A revolution in human-computer interaction might be close at hand.

Journal Reference

- Qiang Zhang et al., “Hand gesture recognition algorithm combining hand-type adaptive algorithm and effective-area ratio for efficient edge computing,” J. Electron. Imag. 30(6), 063026 (2021). DOI: 10.1117/1.JEI.30.6.063026