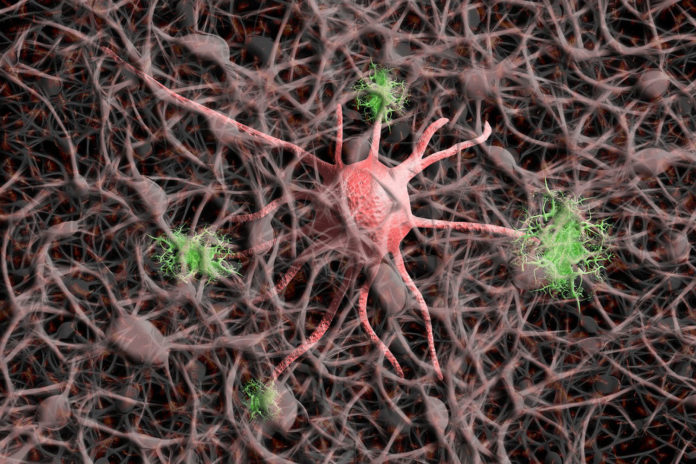

To interact successfully with the environment, the brain must transform sensory inputs into specific movements at specific times. Such sensory-to-motor transformations are mediated by sensorimotor processing in the brain.

In this sensorimotor processing network, individual neurons represent sensory, cognitive, and movement-related information. But determining how these neurons interact between seeing and acting is a complex task.

A new study by the Cognition and Sensorimotor Integration Lab at the University of Pittsburgh Swanson School of Engineering has untangled these mixed neural signals. The team has uncovered how neurons encode and decode that information and differentiate between motor and sensory signals.

The researchers discovered a reliable temporal pattern in neuron activity that was tied to movement, and they could replicate it with microstimulation.

The scientists studied how signals are decoded when they lead to movement and distinguished this from how information is encoded during visual processing.

Neeraj Gandhi, professor of bioengineering who leads the Cognition and Sensorimotor Integration Lab at Pitt, said, “The same groups of neurons can communicate information about sensations and movement, and the brain knows which signal is which. We found it’s as if groups of neurons encode the same information in one ‘language’ to send messages about sensation and in another ‘language’ to send information about the movement.”

“The receiving groups of neurons only act on one of the languages — that’s the key.”

For this study, the scientists used microstimulation to pinpoint the encoding and decoding process. They also reproduced the pattern of neural activity in non-human primate brains and elicited the intended motor response.

These findings offer new insight into the long-standing debate on motor preparation and generation by locating the movement gating signal in temporal features of activity within shared neural substrates. They could have significant implications for brain-computer interfaces and neuroprosthetics.

Uday K. Jagadisan, a co-author of the study, said, “For neuroprosthetics, this research could create a way to put the brakes on and inhibit response when you don’t need it, and release when needed, all based on neuron chatter. Current technology is just delivering a pulse every few milliseconds. If you can control the time when each pulse is delivered, you can select the patterned microstimulation to achieve the effect that you want.”

Journal Reference:

- Uday K. Jagadisan, Neeraj J. Gandhi. Population temporal structure supplements the rate code during sensorimotor transformations. Current Biology, 2022; DOI: 10.1016/j.cub.2022.01.015