Like many historical developments in artificial intelligence, the widespread adoption of deep neural networks (DNNs) was enabled in part by synergistic hardware. In 2012, building on earlier works, Krizhevsky et al. showed that the backpropagation algorithm could be efficiently executed with graphics-processing units to train large DNNs for image classification. Since 2012, the computational requirements of DNN models have grown rapidly, outpacing Moore’s law. Now, DNNs are increasingly limited by hardware energy efficiency.

The emerging DNN energy problem has inspired special-purpose hardware: DNN ‘accelerators’, most of which are based on direct mathematical isomorphism between the hardware physics and the mathematical operations in DNNs. Several accelerator proposals use physical systems beyond conventional electronics, such as optics and analogue electronic crossbar arrays. Most devices target the inference phase of deep learning, which accounts for up to 90% of the energy costs of deep learning in commercial deployments, although, increasingly, devices are also addressing the training phase.

However, implementing trained mathematical transformations by designing hardware for strict, operation-by-operation mathematical isomorphism is not the only way to perform efficient machine learning. Instead, the hardware’s physical transformations can be trained directly to perform the desired computations.

Cornell researchers have found a way to train physical systems, ranging from computer speakers and lasers to simple electronic circuits, to perform machine-learning computations, such as identifying handwritten numbers and spoken vowel sounds.

By turning these physical systems into the same kind of neural networks that drive services like Google Translate and online searches, the researchers have demonstrated an early but viable alternative to conventional electronic processors – one with the potential to be orders of magnitude faster and more energy-efficient than the power-gobbling chips in data centers and server farms that support many artificial-intelligence applications.

“Many different physical systems have enough complexity in them that they can perform a large range of computations,” said Peter McMahon, assistant professor of applied and engineering physics in the College of Engineering, who led the project. “The systems we performed our demonstrations with look nothing like each other, and they seem to have nothing to do with handwritten-digit recognition or vowel classification, and yet you can train them to do it.”

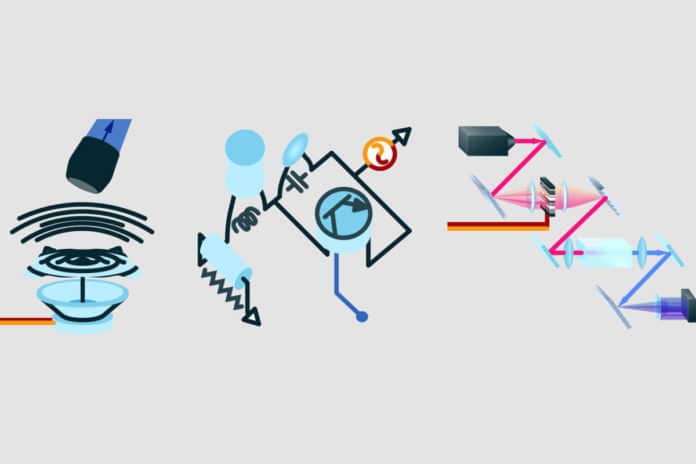

For this project, researchers focused on one type of computation: machine learning. The goal was to find out how to use different physical systems to perform machine learning in a generic way that could be applied to any system. The researchers developed a training procedure that enabled demonstrations with three diverse types of physical systems – mechanical, optical, and electrical. All it required was a bit of tweaking and a suspension of disbelief.

“Artificial neural networks work mathematically by applying a series of parameterized functions to input data. The dynamics of a physical system can also be thought of as applying a function to data input to that physical system,” McMahon said. “This mathematical connection between neural networks and physics is, in some sense, what makes our approach possible, even though the notion of making neural networks out of unusual physical systems might at first sound really ridiculous.”
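The connection McMahon describes can be illustrated with a minimal sketch. It is a toy illustration, not the researchers' code: `toy_physical_layer`, the fixed `MIXING` matrix, and the sine response are hypothetical stand-ins for a real physical system, chosen only to show that a physical transformation with a few adjustable controls has the same shape as a neural-network layer (input plus parameters in, output out).

```python
import numpy as np

rng = np.random.default_rng(0)

# Fixed "physics" of the toy system: it is not trainable and is never edited.
MIXING = rng.normal(size=(4, 6))

def dense_layer(x, theta):
    """Conventional layer: the parameters are a weight matrix and a bias."""
    weights, bias = theta
    return np.tanh(weights @ x + bias)

def toy_physical_layer(x, theta):
    """Hypothetical physical transformation: data x and trainable controls
    theta are both fed in as the drive; the system's response is fixed."""
    drive = np.concatenate([x, theta])
    return np.sin(MIXING @ drive)

def apply_layers(layers, x):
    """Apply a series of parameterized functions to the input, in order."""
    for layer, theta in layers:
        x = layer(x, theta)
    return x

x = rng.normal(size=4)
digital = [(dense_layer, (rng.normal(size=(4, 4)), np.zeros(4))) for _ in range(2)]
physical = [(toy_physical_layer, rng.normal(size=2)) for _ in range(2)]

y_digital = apply_layers(digital, x)
y_physical = apply_layers(physical, x)
# Different kinds of layers can also be chained, since each is just a function.
y_mixed = apply_layers([digital[0], physical[0]], x)
```

In the toy physical layer, the only trainable quantities are the two control values in `theta`. The system's response itself is never modified, which mirrors the idea of training a physical system through its adjustable inputs.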

For the mechanical system, the researchers placed a titanium plate atop a commercially available speaker, creating what is known in physics as a driven multimode mechanical oscillator. The optical system consisted of a laser beam shone through a nonlinear crystal that converted the colors of incoming light into new colors by combining pairs of photons. The third experiment used a small electronic circuit with just four components – a resistor, a capacitor, an inductor, and a transistor – of the sort a middle-school student might assemble in science class.

In each experiment, the pixels of an image of a handwritten number were encoded in a pulse of light or an electrical voltage fed into the system. The system processed the information and gave its output as a similar optical pulse or voltage. Crucially, the systems had to be trained to perform the appropriate processing. So the researchers changed specific input parameters and ran multiple samples – such as different numbers in different handwriting – through the physical system, then used a laptop computer to determine how the parameters should be adjusted to achieve the greatest accuracy for the task. This hybrid approach leveraged the standard training algorithm from conventional artificial neural networks, called backpropagation, in a way that is resilient to noise and experimental imperfections.
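The hybrid loop described above can be sketched in a few lines. This is a minimal sketch under stated assumptions, not the researchers' released code: the "physical system" is simulated by `physical_forward`, a hypothetical noisy function that differs slightly from the digital model used on the laptop, and the task (matching a reference response) is invented for illustration. The forward pass runs on the stand-in hardware, while the gradient is estimated with the derivative of the approximate digital model.

```python
import numpy as np

rng = np.random.default_rng(0)

# The digital model knows the system only approximately (assumed mismatch).
A_MODEL = rng.normal(size=(3, 3))
A_TRUE = A_MODEL + 0.05 * rng.normal(size=(3, 3))

def physical_forward(x, theta):
    """Stand-in for running the experiment: imperfectly modelled and noisy."""
    return np.tanh(A_TRUE @ x + theta) + 0.01 * rng.normal(size=3)

def model_jacobian(x, theta):
    """d(output)/d(theta) of the digital model tanh(A_MODEL @ x + theta)."""
    pre_activation = A_MODEL @ x + theta
    return np.diag(1.0 - np.tanh(pre_activation) ** 2)

def train(inputs, targets, steps=200, learning_rate=0.5):
    theta = np.zeros(3)
    losses = []
    for _ in range(steps):
        grad = np.zeros(3)
        loss = 0.0
        for x, target in zip(inputs, targets):
            y = physical_forward(x, theta)   # forward pass on the "hardware"
            error = y - target               # dLoss/dy at the measured output
            loss += 0.5 * np.sum(error ** 2)
            # Backward pass on the laptop, using the digital model's derivative.
            grad += model_jacobian(x, theta).T @ error
        theta = theta - learning_rate * grad / len(inputs)
        losses.append(loss / len(inputs))
    return theta, losses

# Toy task: find the controls that reproduce a reference response.
theta_reference = np.array([0.5, -0.3, 0.8])
inputs = 0.5 * rng.normal(size=(32, 3))
targets = np.tanh(inputs @ A_TRUE.T + theta_reference)

theta, losses = train(inputs, targets)
print(f"loss: {losses[0]:.4f} -> {losses[-1]:.4f}")
```

Because the loss is always computed from the measured output rather than from the model's prediction, errors in the digital model and noise in the measurement perturb the gradient estimate but do not change what is being optimized. That is one plausible reading of why such a scheme tolerates experimental imperfections.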

The researchers were able to train the optical system to classify handwritten numbers with an accuracy of 97%. While this accuracy is below the state-of-the-art for conventional neural networks running on a standard electronic processor, the experiment shows that even a very simple physical system, with no obvious connection to conventional neural networks, can be taught to perform machine learning and could potentially do so much faster, and using far less power, than conventional electronic neural networks.

The optical system was also successfully trained to recognize spoken vowel sounds.

The researchers have posted their Physics-Aware-Training code online so that others can turn their own physical systems into neural networks. The training algorithm is generic enough that it can be applied to almost any such system, even fluids or exotic materials, and diverse systems can be chained together to harness the most useful processing capabilities of each one.

“It turns out you can turn pretty much any physical system into a neural network,” McMahon said. “However, not every physical system will be a good neural network for every task, so there is an important question of what physical systems work best for important machine-learning tasks. But now there is a way to try to find out – which is what my lab is currently pursuing.”

Journal Reference

- Logan G. Wright, Tatsuhiro Onodera, Martin M. Stein, Tianyu Wang, Darren T. Schachter, Zoey Hu & Peter L. McMahon. Deep physical neural networks trained with backpropagation. Nature 601, 549–555 (2022). DOI: 10.1038/s41586-021-04223-6