Machine learning systems learn from data, which lets computers carry out complex processes intelligently. Recent years have seen exciting advances in machine learning that have expanded its capabilities across a wide range of applications.

For years, Google has used machine learning for tasks such as autocorrecting misspelled words and showing useful search results. It also built its own virtual assistant, Google Assistant. Just say ‘OK Google,’ and the assistant is ready to help you with a variety of tasks.

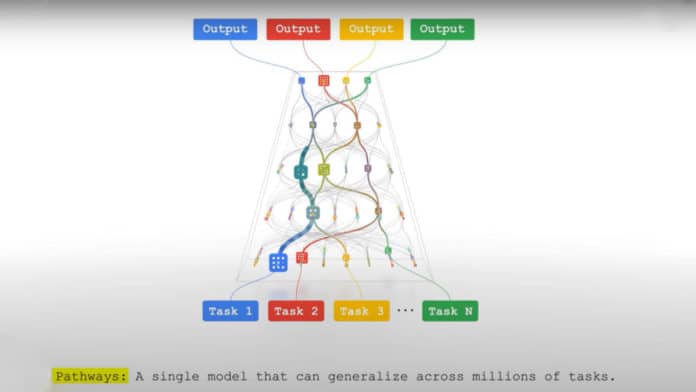

Google continues to make progress in AI, and it has now introduced a next-generation AI architecture called Pathways.

As Google's blog post puts it, “Pathways is a new way of thinking about AI that addresses many of the weaknesses of existing systems and synthesizes their strengths. Pathways will enable us to train a single model to do thousands or millions of things.”

Today’s AI systems are typically trained from scratch for each new problem, so every new task requires a large amount of data and a lot of time.

Jeff Dean, Google Senior Fellow and SVP of Google Research, said, “Imagine if, every time you learned a new skill (jumping rope, for example), you forgot everything you’d learned – how to balance, how to leap, how to coordinate the movement of your hands – and started learning each new skill from nothing.”

Pathways aims to solve this problem. Unlike older approaches, it does not start from scratch for every new task; a single model can build on what it has already learned.

Pathways also enables multiple senses: it allows multimodal models that encompass vision, auditory, and language understanding simultaneously. According to Google, the result is a model that is more insightful and less prone to mistakes and biases.

It could also handle more abstract forms of data, helping find useful patterns that have eluded human scientists in complex systems such as climate dynamics.

Most existing models are dense, meaning the whole neural network activates to accomplish every task. This makes them inefficient.

Pathways will instead make models sparse and efficient: only small pathways through the network are called into action, as needed.

Dean noted, “The model dynamically learns which parts of the network are good at which tasks — it learns how to route tasks through the most relevant parts of the model. A big benefit to this kind of architecture is that it not only has a larger capacity to learn a variety of tasks, but it’s also faster and much more energy-efficient because we don’t activate the entire network for every task.”
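To make the dense-versus-sparse distinction concrete, here is a minimal Python sketch of top-k gated routing in the style of a mixture-of-experts layer. The article does not describe how Pathways actually routes tasks, so this is an assumption, not Google's implementation: the toy "experts", the hand-picked router weights, and the helper names (`make_expert`, `dense_forward`, `sparse_forward`) are all hypothetical. In a real model the router weights would be learned, as Dean describes; here they are fixed so the behavior is easy to follow.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(scores: Sequence[float]) -> List[float]:
    # Numerically stable softmax: subtract the max before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def make_expert(scale: float) -> Expert:
    # Hypothetical toy "expert": scales its input. Stands in for a sub-network.
    def expert(x: Vector) -> Vector:
        return [scale * v for v in x]
    return expert


def dense_forward(x: Vector, experts: List[Expert]) -> Tuple[Vector, int]:
    # Dense: every expert runs on every input; outputs are averaged.
    outputs = [e(x) for e in experts]
    n = len(outputs)
    averaged = [sum(o[j] for o in outputs) / n for j in range(len(x))]
    return averaged, n  # n = number of experts evaluated


def sparse_forward(
    x: Vector, experts: List[Expert], router_weights: List[Vector], k: int = 1
) -> Tuple[Vector, List[int]]:
    # Sparse: a router scores each expert for this input and only the
    # top-k experts run. Their outputs are mixed using the gate values,
    # renormalized over the selected experts.
    gates = softmax([dot(w, x) for w in router_weights])
    top = sorted(range(len(experts)), key=lambda i: gates[i], reverse=True)[:k]
    norm = sum(gates[i] for i in top)
    out = [0.0] * len(x)
    for i in top:
        y = experts[i](x)  # only the selected experts are evaluated
        for j in range(len(x)):
            out[j] += gates[i] / norm * y[j]
    return out, top


# Usage with four toy experts and hand-picked (not learned) router weights.
experts = [make_expert(s) for s in (0.5, 1.0, 2.0, 3.0)]
router_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
x = [2.0, 0.5]

dense_out, dense_count = dense_forward(x, experts)
sparse_out, active = sparse_forward(x, experts, router_weights, k=1)
print("dense:", dense_out, "experts run:", dense_count)
print("sparse:", sparse_out, "experts run:", active)
```

With `k=1`, only one of the four experts runs for this input, whereas the dense pass evaluates all four. Raising `k` trades extra computation for blending the outputs of more experts.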