Engineers at Rice University are developing a flat microscope implant, called FlatScope, that they hope could one day beam sensory information straight to your brain.

The implant has the potential to capture brain activity in much more detail than is currently possible. It could also monitor and stimulate several million neurons in the cortex (our gray matter) at a time.

The scientists want to scan the brain at a deep enough level to work out the fundamentals of sensory input, which is the key to learning how to control those inputs.

The scientists drew inspiration from advances in semiconductor manufacturing. Chip makers can now build extremely dense processors, with billions of elements on a chip, for the phone in your pocket. So why not apply these advances to neural interfaces?

In collaboration with the defense agency DARPA, and as part of the Neural Engineering System Design (NESD) program, the scientists are now developing a fully functioning, implantable system that enables communication between the brain and the digital world.

“Such an interface would convert the electrochemical signaling used by neurons in the brain into the ones and zeros that constitute the language of information technology, and do so at far greater scale than is currently possible,” DARPA said.

“It could allow partially sighted or blind people to see the world through a camera attached to their shirt, though that’s still a long way off.”

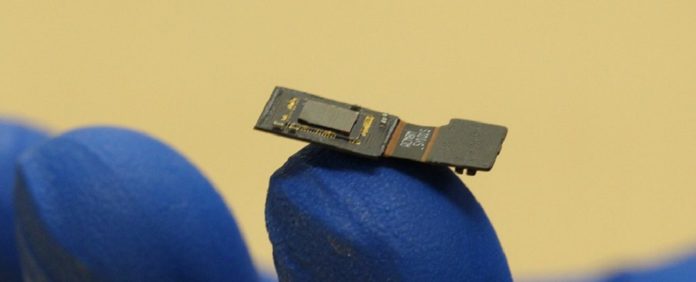

Currently, the prototype sits on top of the brain and detects optical signals from neurons in the cortex. Unlike today’s best brain monitoring systems, FlatScope could handle thousands of neurons at once when it is ready to go.

To find ways of getting neurons to release photons when triggered, the scientists are now working with bioluminescence.

The team is also working on the FlatCam: a super-thin, low-powered camera sensor that could be used in an implant.

Yet another challenge is putting together algorithms that can crunch through the data coming from the brain and make sense of the 3D maps of light and neuron activity being fed back.