For about the last ten years, researchers have been using artificial intelligence techniques called machine learning to decode human brain activity. Applied to neuroimaging data, these algorithms can reconstruct what we see, hear, and even what we think. For example, they show that words with similar meanings are grouped together in zones in different parts of our brain.

However, by recording brain activity during a simple task (deciding whether one hears BA or DA), neuroscientists from the University of Geneva (UNIGE), Switzerland, and the Ecole normale supérieure (ENS) in Paris now show that the brain does not necessarily use the regions identified by machine learning to perform a task.

Above all, these regions reflect the mental associations related to the task. So while machine learning is effective for decoding mental activity, it is not necessarily effective for understanding the specific information-processing mechanisms of the brain. The results are available in the journal PNAS.

Modern neuroscientific data-processing techniques have recently highlighted how the brain spatially organizes the representation of word sounds, allowing specialists to map the regions of activity precisely. The UNIGE neuroscientists therefore asked how the brain itself uses these spatial maps when it performs specific tasks.

“We used all the available human neuroimaging techniques to try to answer this question,” says Anne-Lise Giraud, a professor in the Department of Basic Neurosciences of the UNIGE Faculty of Medicine.

A focal area for the decision

The UNIGE neuroscientists had about fifty people listen to a continuum of syllables ranging from BA to DA. The central phonemes were highly ambiguous, so the two options were hard to tell apart. The researchers then used functional MRI and magnetoencephalography to see how the brain behaves when the acoustic stimulus is clear or, conversely, when it is ambiguous and requires the brain to build an active mental representation of the phoneme and interpret it.

“We observed that, regardless of how hard it is to classify the syllable heard as BA or DA, the decision always engages a small region of the posterior superior temporal lobe,” notes Anne-Lise Giraud.

The neuroscientists then double-checked their results on a patient with a lesion in the specific region of the posterior superior temporal lobe used to distinguish BA from DA. “And indeed, although the patient did not appear to have any symptoms, he was no longer able to distinguish the BA and DA phonemes … this confirms that this small region is essential for processing this type of phoneme information,” adds Sophie Bouton, a researcher in Anne-Lise Giraud’s team.

The “false positives” of machine-learning decoding

But is information about the identity of the syllable present only locally, as the Geneva researchers’ experiment showed, or is it present more widely in the brain, as the maps produced by machine learning suggest? To answer this question, the neuroscientists repeated the BA/DA task with people who have electrodes implanted directly in their brains for medical reasons.

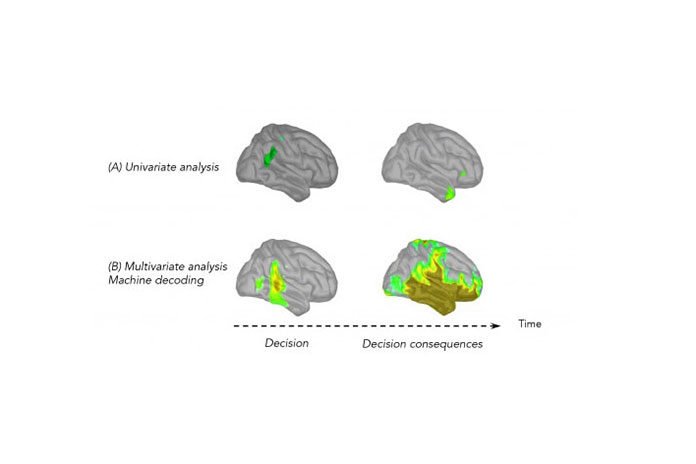

This technique can record highly focal neural activity. A univariate analysis made it possible to see which region of the brain was engaged during the task, electrode by electrode and contact by contact. Only the contacts in the posterior superior temporal lobe were active, confirming the results of the Geneva study.
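The contact-by-contact logic can be sketched in Python. This is a minimal illustration under stated assumptions, not the study’s analysis pipeline: it assumes each trial is a list holding one response value per electrode contact, uses Welch’s t statistic as the per-contact test, and flags contacts above a hypothetical threshold with no correction for multiple comparisons. The function names and the toy data are invented for illustration.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic for two independent samples (each needs >= 2 values)."""
    # Standard error combines each group's sample variance (n - 1 denominator).
    se = math.sqrt(variance(a) / len(a) + variance(b) / len(b))
    return (mean(a) - mean(b)) / se


def univariate_map(trials, labels, threshold=4.0):
    """Return the indices of contacts whose BA-vs-DA |t| exceeds `threshold`.

    trials: list of trials, each a list holding one response per contact.
    labels: "BA" or "DA" for each trial. `threshold` is a hypothetical cut-off.
    """
    active = []
    for c in range(len(trials[0])):
        # Each contact is tested on its own: this is what makes the analysis univariate.
        ba = [trial[c] for trial, lab in zip(trials, labels) if lab == "BA"]
        da = [trial[c] for trial, lab in zip(trials, labels) if lab == "DA"]
        if abs(welch_t(ba, da)) > threshold:
            active.append(c)
    return active


# Invented toy data: contact 0 responds differently to BA and DA; contacts 1 and 2 do not.
trials = [
    [5.0, 1.0, 0.2], [5.2, 1.1, 0.1], [4.9, 0.9, 0.3],  # BA trials
    [1.0, 1.0, 0.2], [1.1, 0.9, 0.3], [0.9, 1.1, 0.1],  # DA trials
]
labels = ["BA"] * 3 + ["DA"] * 3
print(univariate_map(trials, labels))  # → [0]
```

Because every contact is judged in isolation, a contact is reported only if its own signal separates BA from DA; weak effects spread over many contacts are not pooled.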

However, when a machine-learning algorithm was applied to all of the data at once, making a multivariate decoding of the data possible, positive results appeared across the whole temporal lobe, and even beyond it. “Learning algorithms are clever but ignorant,” says Anne-Lise Giraud.

“They are extremely sensitive and use all of the information in the signals. However, they do not tell us whether this information was used to perform the task or whether it reflects the consequences of the task: in other words, information spreading through our brain,” continues Valérian Chambon, a researcher at the Département d’études cognitives at the ENS.

The mapped regions outside the posterior superior temporal lobe are therefore, in a sense, false positives. These regions hold information about the decision the subject makes (BA or DA), but they are not needed to perform the task.
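Why a decoder can flag regions that are not needed for the task can be illustrated with a toy example. This is a minimal Python sketch, not the classifier used in the study (which the article does not name): it assumes a nearest-centroid decoder evaluated with leave-one-out cross-validation, and the function names and data are invented. Contact 2 is a hypothetical site that merely echoes the subject’s reported choice, like the regions that hold information on the decision without being required to make it.

```python
import math


def centroid(rows):
    """Element-wise mean of equal-length vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def loo_decoding_accuracy(trials, labels):
    """Leave-one-out accuracy of a nearest-centroid decoder over all given contacts jointly."""
    correct = 0
    for i, (held_out, true_label) in enumerate(zip(trials, labels)):
        # Train on every trial except the held-out one.
        train = [(t, lab) for j, (t, lab) in enumerate(zip(trials, labels)) if j != i]
        centroids = {
            lab: centroid([t for t, lbl in train if lbl == lab])
            for lab in sorted({lbl for _, lbl in train})
        }
        # Predict the class whose mean pattern is closest in Euclidean distance.
        predicted = min(centroids, key=lambda lab: math.dist(held_out, centroids[lab]))
        if predicted == true_label:
            correct += 1
    return correct / len(trials)


# Invented toy data. Contact 0 stands in for a site that encodes the syllable;
# contact 2 is a hypothetical "downstream" site that only echoes the reported choice.
trials = [
    [5.0, 0.3, 2.1], [5.2, 0.1, 1.9], [4.8, 0.2, 2.0],  # reported BA
    [1.0, 0.2, 0.1], [1.2, 0.3, -0.1], [0.8, 0.1, 0.0],  # reported DA
]
labels = ["BA"] * 3 + ["DA"] * 3

print(loo_decoding_accuracy(trials, labels))  # → 1.0 (all contacts pooled)
print(loo_decoding_accuracy([[t[2]] for t in trials], labels))  # → 1.0 (echo contact alone)
```

Both calls score perfectly, yet in this toy setup only contact 0 is meant to carry the syllable. Decoding accuracy shows that information about the choice is present in a signal, not whether that signal is used to make the choice; establishing the latter takes a different kind of evidence, such as the lesion patient described earlier.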

This research offers a better understanding of how our brain represents syllables and, by showing the limits of artificial intelligence in certain research settings, invites welcome reflection on how to interpret data produced by machine-learning algorithms.