It’s no secret that algorithms run the world, powering everything from Google’s search results to Uber’s car-pool capabilities. But under the hood of the world’s computing infrastructure is a more fundamental set of algorithms whose origins have not previously been analyzed.

A new MIT-led study reveals that many of these algorithms were made in America, some by native-born Americans but increasingly also by immigrants working at American institutions.

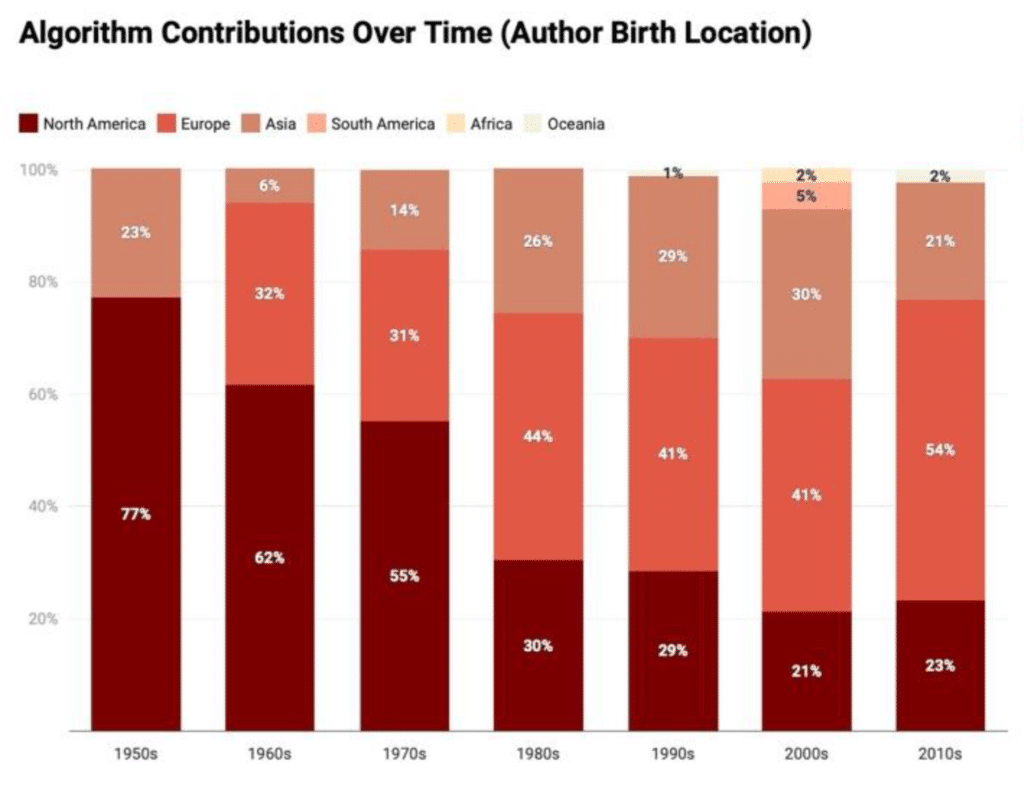

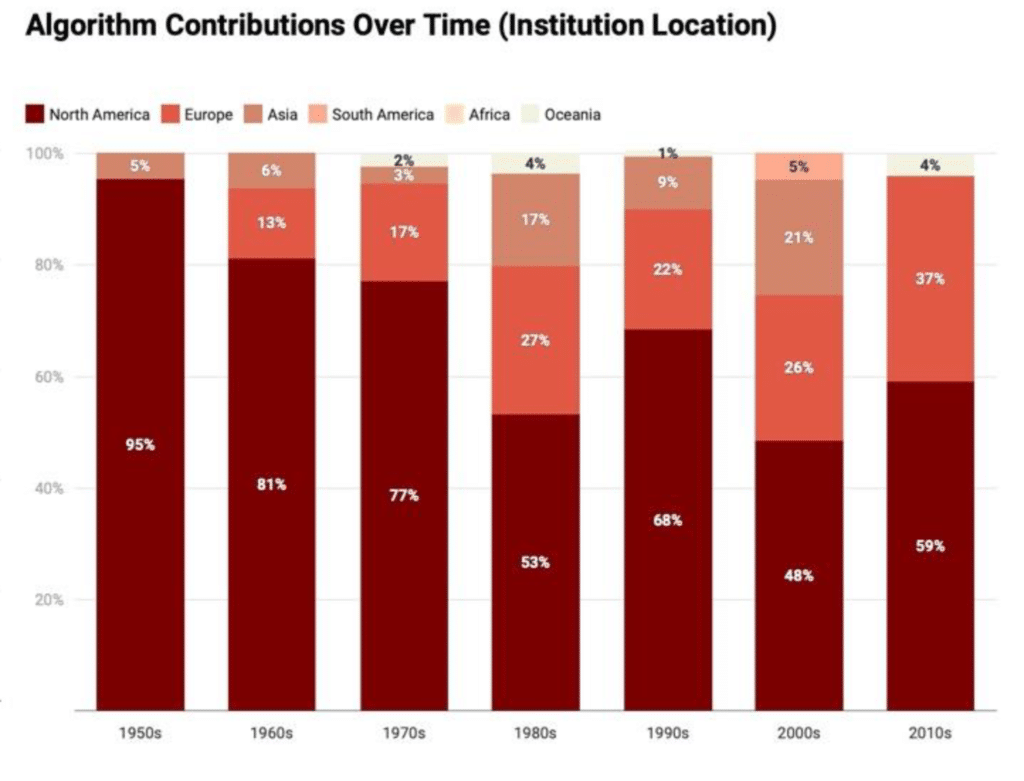

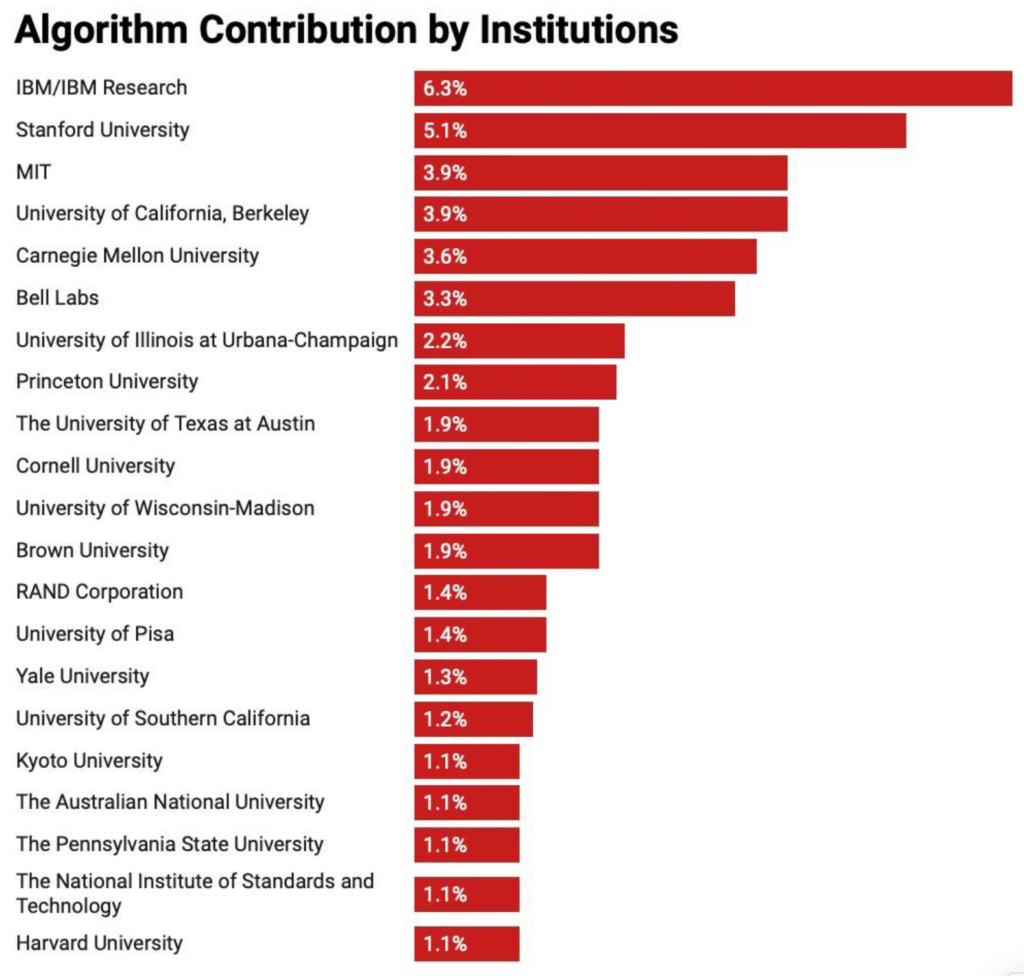

By analyzing improvements over 70 years in the 128 most important “families” of algorithms, researchers found that roughly two-thirds of the improvements have come from researchers at North American institutions, but that in the last 30 years more than three-quarters of contributions have come from scientists originally hailing from other countries.

“If we want the United States to continue to be ground zero for computer science, we need to make sure that our policies make it easy to continue to host international researchers in our institutions,” says Thompson, a research scientist at MIT’s Computer Science and Artificial Intelligence Lab (CSAIL) and the Sloan School of Management.

The study shows that algorithmic progress to date has been disproportionately Western-centric. Although residents of North America and Europe make up only 15 percent of the world population, they have contributed more than three-quarters of the algorithms. The driving factor seems to be how wealthy a country is: GDP per capita was found to matter more for producing important algorithms than a country’s population size.

(On average, a $10,000 bump in GDP per capita produced a bigger jump in algorithm contributions than a population increase of 100 million people.)

“There’s a danger that algorithm development may suffer from the problem of the ‘lost Einsteins,’ where those with natural talent in under-developed countries are unable to reach their full potential because of a lack of opportunity,” says Thompson.

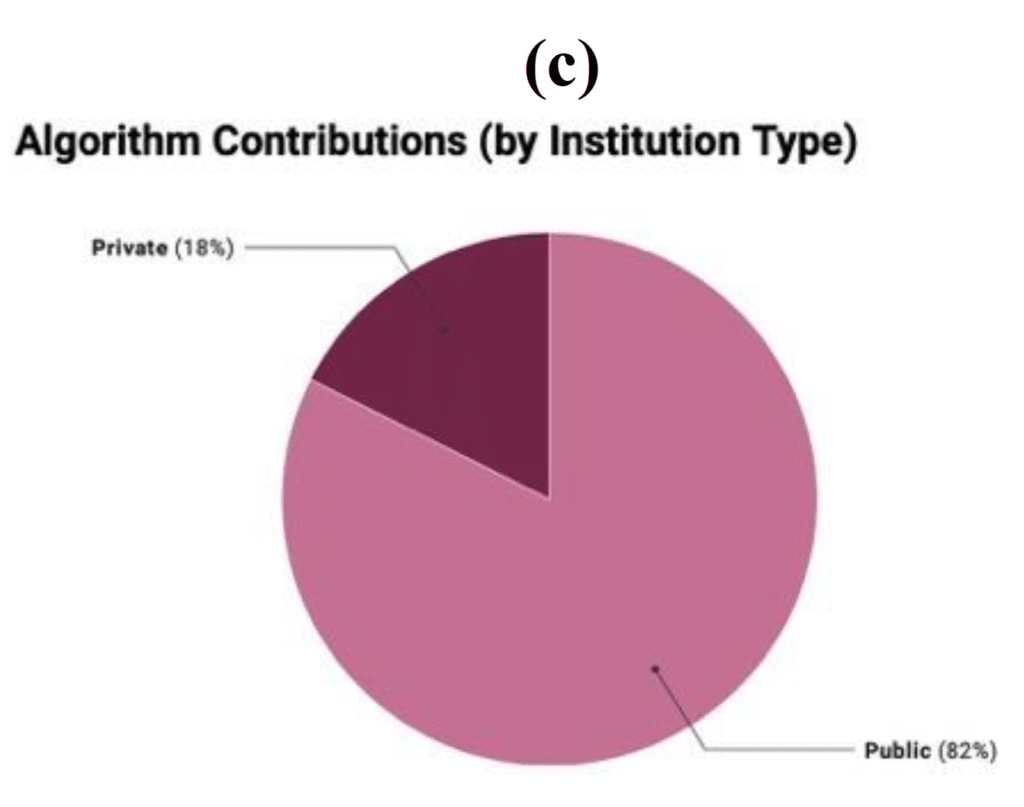

Another key finding highlighted the importance of federal funding for university research: 82 percent of the influential algorithms came from the work of nonprofits and public-facing institutions like universities, as opposed to private companies.

“Generally speaking, giving money to public institutions means you’re more likely to get a public benefit,” says Thompson, who co-wrote the study with visiting Georgia Tech student research assistant Yash Sherry and former CSAIL researcher Shuning Ge.

To develop the dataset, the team first pored over more than 1,000 research papers and 50 textbooks to create a list of about 300 algorithms that were either the first to solve particular problems or improved on state-of-the-art methods. These included everything from better list-sorting to the infamous “traveling-salesperson” problem, where the goal is to find the shortest route that visits every city in a set.

Of those 300 algorithms, the team ultimately analyzed a subset of 180 for which information about authors and institutions could be sourced. Collectively, the researchers refer to this set of fundamental algorithms as “The Algorithmic Commons” because, like the Digital Commons, it represents advancements in knowledge whose benefits, they believe, can be widely shared.

“The great thing about algorithm improvement is that you get more output without having to put in more resources,” says Thompson. “Just as a productivity improvement for a business allows them to produce more output for a given set of inputs, an algorithmic improvement allows a computer to tackle bigger, harder problems for the same computational budget.”

The project coincides with ongoing work from Thompson and Sherry showing that improvements in algorithms have often rivaled and even exceeded the decades-long improvements in computer hardware that have come from Moore’s Law.

Journal Reference

- Thompson NC, Ge S, Sherry YM. Building the algorithm commons: Who discovered the algorithms that underpin computing in the modern enterprise? Global Strategy Journal. 2020;1–17. DOI: 10.1002/gsj.1393